Author: Boxu Li at Macaron

Architecture and Model Infrastructure

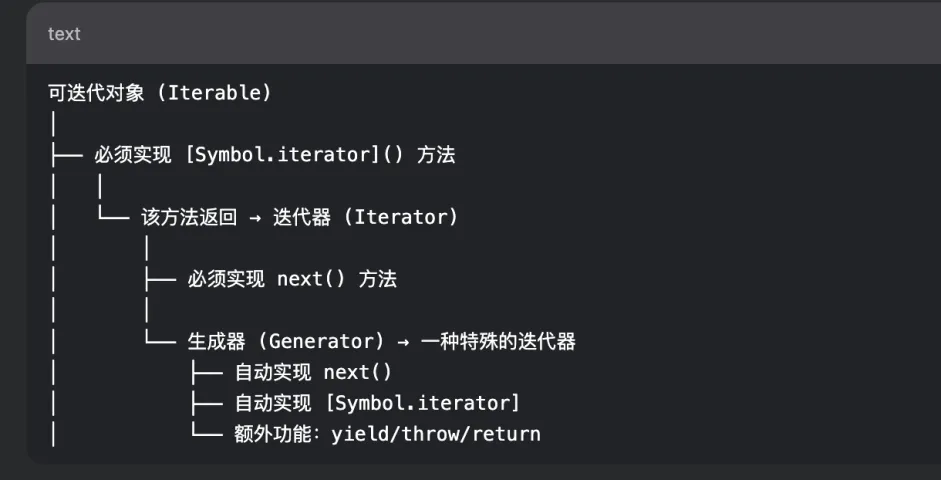

At its core, Gemini Enterprise is built on Google’s most advanced Gemini family of models – the “brains” providing world-class intelligence for every task. These foundation models (e.g. Gemini 2.5 Pro and Gemini 2.5 Flash) represent Google’s state-of-the-art in generative AI, developed by Google DeepMind and trained on multimodal data (text, code, images, audio, video). Gemini models are designed for complex reasoning and rich understanding: for example, Gemini 2.5 Pro can solve challenging problems across diverse inputs and boasts up to a 1 million token context window for long documents. (By comparison, OpenAI’s GPT-4 in many enterprise tools tops out around 128k tokens.) This massive context lets Gemini analyze lengthy contracts, multi-hour transcripts, or entire codebases without breaking them into chunks. Gemini models are inherently multimodal, meaning a single session can process text, images, audio, and more together – a key differentiator from earlier text-only models.

Google’s AI infrastructure provides the backbone for these models. Gemini Enterprise runs on the same reliable, AI-optimized cloud that powers Google Search and YouTube, leveraging NVIDIA GPUs and Google’s custom Tensor Processing Units (TPUs). In fact, Google’s latest TPU generation (code-named Ironwood) delivers a 10× performance boost over its predecessor, enabling fast, scalable inference for Gemini’s large models. This full-stack optimization – from purpose-built hardware up to the AI platform – is central to Google’s approach. As Google Cloud CEO Thomas Kurian notes, true AI transformation requires a complete stack; with Gemini Enterprise, Google controls everything from “TPUs to [its] world-class Gemini models” to the application layer. This tight integration is why nine of the top 10 AI research labs and countless AI startups already use Google’s cloud for generative AI.

On the model level, Google offers multiple Gemini model tiers to balance performance and cost. The “Flash” models (e.g. Gemini 2.5 Flash) prioritize speed and affordability, outputting results at hundreds of tokens per second with minimal latency. They still maintain strong reasoning, with a knowledge cutoff of January 2025 and support for lengthy outputs (up to 65k tokens). The “Pro” models (Gemini 2.5 Pro, etc.) maximize quality and reasoning for the hardest tasks, at the cost of slower throughput. For example, Gemini 2.5 Pro’s outputs excel in complex coding, scientific reasoning, and “needle-in-haystack” knowledge retrieval. It was the top model on the LMArena benchmark for text and vision capabilities for over 6 months. Both Flash and Pro models share the same expansive context limits (≈1M tokens) and multimodal support, so enterprises can choose per use-case: Flash for rapid interactive chats and Pro for in-depth analyses or critical workflows. All Gemini models come with built-in support for advanced prompt features like “thinking” mode (a step-by-step chain-of-thought reasoning process) and tool use (e.g. calling code execution or web search) to boost accuracy. In short, the architecture combines Google’s frontier AI research with a cloud architecture optimized for speed at scale – ensuring even large enterprises can deploy multimodal AI to thousands of employees with high performance.

Six Core Components of the Platform

Beyond the models themselves, Google designed Gemini Enterprise as a layered platform with six core components that work together:

- Foundation Models: As discussed, access to the full Gemini model family, including the latest reasoning-optimized tiers (e.g. Gemini 2.5 Pro), forms the intelligence layer of the system. These models handle the natural language understanding, generation, and reasoning for all queries and agents.

- No-Code Agent Workbench: A visual, no-code/low-code interface allows users (even non-programmers) to build custom AI agents and orchestrate multi-step workflows. Through this Agent Designer workbench, a business analyst or marketer can chain together tasks (e.g. “research → analyze → draft → act”) by simply configuring blocks rather than writing code. This dramatically lowers the barrier to automate processes with AI – “you don’t need to learn Python” to create an agent, as one analyst noted. The workbench provides templates and building blocks to define agent goals, attach data sources, and set up tool usage in a visual flow.

- Pre-Built Agents and Marketplace: To deliver value on day one, Gemini Enterprise includes a gallery of Google-built agents for common enterprise needs. Examples include a “Deep Research” agent that can investigate complex topics across company knowledge, a “Data Science” agent that analyzes datasets for insights, and a customer service agent for handling support queries. In addition, Google launched an Agent Marketplace (partner ecosystem) with thousands of validated third-party agents that organizations can plug in. Partners like Salesforce, Atlassian (Jira/Confluence), GitLab, Shopify, and many others have built specialized agents or integrations listed in this marketplace. This open catalog means enterprises can “discover, filter, and deploy” ready-made agents for various domains, all vetted for security and interoperability. It’s a significant ecosystem play: over 100,000 Google Cloud partners are supporting Gemini Enterprise’s agentic platform, ensuring companies aren’t locked into one vendor’s tools.

- Connectors and Data Integration: An AI agent is only as good as the context and data it can access. Gemini Enterprise provides native connectors to over 100 enterprise data sources and SaaS applications. These adapters securely connect the AI to corporate content “wherever it lives” – be it Google Workspace data (Drive, Gmail, Docs), Microsoft 365 data (SharePoint, Teams, Outlook), or business apps like Salesforce, SAP, ServiceNow, Jira, Confluence, databases, etc. The platform can federate queries across multiple sources and enforce each system’s permission controls so that results are “permissions-aware”. Under the hood, Gemini Enterprise uses Vertex AI Search indexing for unified search across structured and unstructured content, with options to either federate queries in real-time or ingest data into an index for faster retrieval. Enterprises can choose per source: e.g. live federation for frequently updated systems, or scheduled ingestion for static repositories. The result is an enterprise knowledge graph spanning siloed systems. In practice, this means an employee can ask Gemini Enterprise a question and it will retrieve facts from SharePoint, Salesforce, email threads, and database records, then synthesize an answer grounded in those sources. This powerful intranet search capability is one of the platform’s biggest selling points – it transforms previously “trapped” institutional knowledge into accessible answers.

- Centralized Governance and Security: All of these agents and data hookups are managed under a unified governance framework. Administrators get a central console to visualize, secure, and audit every agent and data connection in the organization. Fine-grained access controls can be set so that agents only have the least-privilege access needed for their tasks (preventing an HR bot from pulling finance data, for example). Audit logs are captured for all agent actions and user prompts, and can be exported or monitored in real-time. Google also provides tools to label and classify sensitive data (via integration with DLP APIs and data catalogs), so that Gemini will handle things like PII or PHI appropriately. In short, governance is “first-class” in the platform – a response to enterprise concerns around uncontrolled AI. Google even offers “Model Armor”, a managed service that screens prompts and responses for security/privacy risks (like prompt injections or data leakage) before they reach the model. These safety layers add defensive guardrails around the LLM. We’ll discuss security and compliance in detail later, but suffice it to say the architecture isn’t just a model and an API – it’s “enterprise-grade” with built-in admin controls, compliance hooks, and auditing at every layer.

- Open Ecosystem and Extensibility: Finally, Gemini Enterprise is built on a principle of openness and extensibility. It works across multi-cloud and hybrid environments (including support for Google Distributed Cloud deployments on-premises or at the edge for sensitive data). Google emphasizes that Gemini can operate “seamlessly in Microsoft 365 and SharePoint environments”, not only Google’s own apps. The platform supports emerging open standards – e.g. the Agent2Agent (A2A) protocol, which Google developed with partners so that agents from different vendors or clouds can talk to each other, and the Model Context Protocol (MCP), an open standard for sharing tools and context between systems. For developers, Google open-sourced the Gemini CLI and its extension framework so that anyone can build plugins integrating Gemini into their tools. This open approach is strategic: Google knows enterprise AI success will require broad integration, so it’s positioning Gemini Enterprise as “the AI fabric” that can weave together many apps and cloud services. With 100k+ partners and cross-platform protocols, the ecosystem is a key part of the architecture – not an afterthought.

Bringing these layers together, Gemini Enterprise provides a single secure interface (chat and agent hub) where employees can access all capabilities. They can ask a question in natural language and get a grounded answer with citations, or invoke a custom agent to execute a multi-step workflow. Behind the scenes, the request flows through the components above: the relevant connectors fetch data, the Gemini model analyzes and responds, and any agent actions are orchestrated with governance checks. Google calls Gemini Enterprise the “new front door for AI in the workplace” because it intends to be the entry point to all AI-powered tasks in an organization. Rather than AI being scattered in silos (one tool for code, another for support, etc.), Google’s vision is one platform that “moves beyond simple tasks to automate entire workflows” securely and at scale. In summary, the architecture blends cutting-edge AI models with enterprise integration and control, enabling true organization-wide AI adoption.
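To make the "permissions-aware" retrieval idea concrete, here is a minimal sketch in plain Python. It is not Google's implementation: the `Document`, `Connector`, and `federated_search` names are hypothetical, the keyword match stands in for a real search backend, and group membership is assumed to be the only access rule.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Document:
    source: str                # illustrative label, e.g. "sharepoint" or "hr"
    title: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this document


@dataclass
class Connector:
    """Hypothetical stand-in for one data source connector."""
    name: str
    documents: list = field(default_factory=list)

    def search(self, query):
        # Naive keyword match; a real connector would call the source system's search.
        terms = query.lower().split()
        return [d for d in self.documents
                if any(t in d.text.lower() for t in terms)]


def federated_search(query, connectors, user_groups):
    """Query every connector, then keep only documents the user may read."""
    user_groups = set(user_groups)
    hits = []
    for connector in connectors:
        for doc in connector.search(query):
            if doc.allowed_groups & user_groups:  # per-document permission check
                hits.append(doc)
    return hits


sharepoint = Connector("sharepoint", [
    Document("sharepoint", "Q3 roadmap", "Roadmap for project Foo in Q3",
             frozenset({"engineering"})),
])
hr_system = Connector("hr", [
    Document("hr", "Foo staffing plan", "Salary bands for project Foo hires",
             frozenset({"hr"})),
])

results = federated_search("foo", [sharepoint, hr_system], user_groups=["engineering"])
print([d.title for d in results])
```

Both documents match the query, but an engineering user only sees the roadmap. Filtering before anything reaches the model is the design choice that matters here: content the user cannot read never enters the prompt.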

Deployment Options: Vertex AI, Workspace, and Connectors

Gemini Enterprise is flexible in how and where it can be deployed. Google offers multiple pathways to bring its generative AI into an enterprise environment – whether via Google Cloud, within Google Workspace apps, or even integrated into third-party products through connectors.

- Google Cloud Vertex AI (Managed Cloud Deployment): For organizations building custom applications or wanting tight control, Vertex AI provides Gemini models as a service. The Vertex AI Gemini API exposes Gemini (and other Google foundation models) via Google Cloud’s platform, letting developers call the models with enterprise-grade controls (service accounts, IAM permissions, etc.). This option is ideal if you want to embed Gemini’s capabilities into your own app or backend. It comes with the full Google Cloud ecosystem – logging/monitoring, usage quotas, on-demand scaling, and integration with tools like the Vertex AI RAG Engine for Retrieval-Augmented Generation. Enterprises can choose different regional endpoints (US, EU, Asia) for data residency when using Vertex AI. Notably, Google allows hybrid and on-prem deployment for Gemini models via its Google Distributed Cloud (for customers with strict data sovereignty). In partnership with hardware vendors (like NVIDIA’s Blackwell GPUs), Google can effectively install the Gemini serving stack in an organization’s own data center or a secured edge location. This is a significant differentiator – while the default is cloud, regulated industries (government, finance, healthcare) can opt for an air-gapped Gemini Enterprise instance under their control.

- Google Workspace with Gemini (Native in Productivity Apps): Google is also directly embedding Gemini’s AI assistance into Google Workspace applications (Docs, Sheets, Slides, Gmail, Meet, etc.), bringing generative AI to end-users without any coding. If an organization uses Google Workspace, many Gemini features are available through the UI that users already know. For example, in Google Docs and Gmail, users can invoke “Help me write” powered by Gemini to draft content or refine text. In Google Slides, they can use “Help me design” to generate custom images via the Imagen model (Gemini’s image generation). In Sheets, Gemini can create smart tables or autofill columns using AI inference. Google Meet integrates Gemini for real-time features like translating speech in subtitles while preserving the speaker’s tone, enhancing video quality, and even a “take notes for me” assistant that generates meeting notes automatically. All these features are part of Google Workspace with Gemini, which Google has rolled out across its enterprise tiers. From an admin perspective, the Gemini app can be enabled or disabled as a core service in Workspace. Data from Workspace stays within that environment – e.g. if Gemini summarizes a company Drive document for a user, it respects sharing permissions and does not expose the content to others without access. Google previously marketed these AI enhancements under the “Duet AI” brand, but under the hood it is the Gemini model doing the heavy lifting. This deep integration into daily productivity tools positions Gemini Enterprise against Microsoft’s Office 365 Copilot (more on that in the use-case blog). It means users can get AI help directly in the flow of work – writing emails, analyzing spreadsheets, creating presentations – rather than needing a separate app.

- Gemini Enterprise “App” and Third-Party Connectors: Google also offers Gemini Enterprise as a standalone web application (chat interface plus admin console) for those who want a one-stop intranet AI assistant. Employees can go to this app and chat with Gemini to ask questions, generate content, or execute tasks – essentially a company’s private ChatGPT-like bot grounded in its own data. This Gemini Enterprise app connects to internal data through the aforementioned pre-built connectors for tools like Confluence, Jira, SharePoint, ServiceNow, etc. The connectors continuously sync content (with options for full or incremental sync schedules) into Gemini’s searchable index. The result is an intelligent intranet search on steroids: employees can query everything from policies in Confluence to tickets in Jira or files on a network drive, all from one chat box. Crucially, Gemini Enterprise respects each user’s access rights – it will only retrieve and reveal content the querying user is allowed to see, thanks to integration with identity and ACL systems. In addition, the platform supports connectors to external knowledge – for example, a built-in Google Search grounding tool can pull in up-to-the-minute public web information when appropriate. This can be useful for questions that mix internal and external context (e.g. “Compare our Q3 financial growth to industry benchmarks” – where industry data might be fetched via Google Search). The standalone Gemini Enterprise app can be deployed via the Google Cloud Console (for admins) and then accessed by users in a browser. It effectively becomes the unified AI assistant for the company, replacing the need for separate chatbots for each department. Google has seen early customers use it for diverse scenarios – from a nursing assistant that summarizes patient handoff notes (at HCA Healthcare) to a retail support bot that helps customers self-serve (at Best Buy).

- Developer API (Google AI for Developers): As a complement to Vertex AI, Google launched a simpler Gemini Developer API via its Google AI developer services. This API provides a straightforward, hosted endpoint for Gemini models without requiring a full Google Cloud project. It’s aimed at rapid prototyping and less complex use cases – “the fastest path to build and scale Gemini-powered applications,” according to Google. Most capabilities are similar between the Developer API and Vertex AI, and Google now offers a unified Gen AI SDK (google-genai) that can call either backend with minimal code changes. Essentially, an organization can start building with the developer API (which uses API keys for auth) and later migrate to Vertex AI if they need more enterprise controls or want to integrate with other GCP services. For enterprises, the Vertex route is usually preferred for production (due to VPC network integration, user-managed keys, etc.), but the developer API is a handy option for initial trials or for SaaS providers that want to quickly embed Gemini (similar to how one might use OpenAI’s API).

In summary, Google meets enterprises where they are: If you want a turnkey AI assistant for employees, enable the Gemini app (and Workspace features). If you want APIs to integrate AI into your own apps, use Vertex AI or the developer API. If you need hybrid or on-prem due to regulatory reasons, Google offers that via distributed cloud. And thanks to broad connector support, Gemini Enterprise can even sit atop non-Google ecosystems (e.g. a company that primarily uses Microsoft 365 can still deploy Gemini Enterprise as an overlay assistant connected into SharePoint, Outlook, etc.). This flexibility in deployment is a key aspect of Google’s go-to-market – it recognizes that large customers have heterogeneous IT landscapes and varying risk appetites for cloud. Notably, Google Workspace customers get many Gemini features included in their existing subscriptions (especially if they have the Gemini Enterprise or Ultra add-on), which can accelerate adoption via tools employees already use daily.

Gemini APIs and Customization Mechanisms

While Gemini Enterprise provides no-code tools for business users, it also offers robust APIs and customization options for developers and IT teams to tailor the AI to their organization’s needs. Let’s break down how one can customize Gemini’s behavior and extend its functionality:

Unified GenAI SDK and APIs: Google provides a unified SDK (google-genai library) that allows developers to call Gemini models in various environments (cloud or local) with consistent methods. Whether you’re using the Vertex AI endpoint or the direct Developer API, the SDK handles authentication and endpoints – you simply specify the model (e.g. "gemini-2.0-flash" or "gemini-2.5-pro") and send a prompt. This is similar to OpenAI’s approach, making it easy for teams already familiar with GPT-style APIs to adopt Gemini. In fact, the Gemini API also exposes an OpenAI-compatible endpoint to simplify porting code. Responses from Gemini come with rich structure (token usage, model metadata, etc.), and the API supports both “completion” style prompts and chat (messages with roles). Importantly, the SDK and API support special modes like long context handling (enabling those million-token inputs via batch file uploads) and streaming (to get token-by-token output for real-time apps).
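As a rough illustration of switching backends with the google-genai SDK, the sketch below builds the client arguments for either the Developer API or Vertex AI and sends one prompt. The `client_kwargs` and `ask` helpers are hypothetical names, the environment variable names and the `my-project` and `us-central1` defaults are assumptions, and the SDK is imported lazily so the argument-building part runs without it installed.

```python
import os


def client_kwargs(backend):
    """Build constructor arguments for genai.Client for the chosen backend."""
    if backend == "developer":
        # Developer API: authenticates with an API key (env var name is an assumption).
        return {"api_key": os.environ.get("GEMINI_API_KEY", "")}
    if backend == "vertex":
        # Vertex AI: uses Google Cloud credentials plus a project and region.
        return {
            "vertexai": True,
            "project": os.environ.get("GOOGLE_CLOUD_PROJECT", "my-project"),
            "location": os.environ.get("GOOGLE_CLOUD_LOCATION", "us-central1"),
        }
    raise ValueError(f"unknown backend: {backend!r}")


def ask(prompt, backend="developer", model="gemini-2.5-flash"):
    """Send one prompt to the chosen backend and return the text of the reply."""
    # Imported here so client_kwargs() stays usable without the SDK installed.
    from google import genai

    client = genai.Client(**client_kwargs(backend))
    response = client.models.generate_content(model=model, contents=prompt)
    return response.text


# Example call (needs the google-genai package and valid credentials):
# print(ask("Summarize our travel policy in two sentences.", backend="vertex"))
```

Only the client construction differs between the two backends; the `generate_content` call stays the same, which is what makes a later move from the Developer API to Vertex AI a small change.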

Prompt Customization – System Instructions and Grounding: To customize model behavior without retraining, Gemini supports system-level instructions and grounding data. Much like OpenAI’s system message, developers can provide a “system prompt” that biases the model’s persona or rules for the conversation. For instance, an enterprise can set a persistent system instruction like “You are an assistant for ACME Corp. You always answer according to ACME’s policies and knowledge base. If you don’t know an answer, say so.” This ensures consistency and adherence to company guidelines across all chats. On the grounding side, Google enables Retrieval-Augmented Generation (RAG) both via the platform’s built-in search index and through standalone tools. In Vertex AI, there is a managed RAG Engine that orchestrates retrieving relevant documents (from BigQuery, Cloud Storage, etc.) and feeding them into the prompt. In practice, when a user asks a question, the system can attach top relevant snippets from enterprise data to the model’s context, thereby “grounding” the response in real facts. Gemini Enterprise’s chat interface does this behind the scenes for many queries, returning answers with citations linking back to source documents. Developers integrating Gemini into other apps can replicate this by using the Vertex RAG API or their own retrieval pipeline (e.g. using vector embeddings – note that Gemini offers an embeddings model as well for semantic search). Additionally, Gemini has a built-in tool for live web search grounding – it can call Google Search to fetch up-to-date info on the fly. This is useful for questions about recent events or statistics not in the training data (which has a January 2025 knowledge cutoff for Gemini 2.5). Grounding and retrieval mechanisms are key customization tools – they allow enterprises to inject proprietary knowledge into the model’s answers without altering the model weights, and to get traceable outputs with source references for compliance.
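The grounding flow described above can be sketched as a "retrieve, then build the prompt" step. This is a toy version under stated assumptions: word overlap stands in for embedding-based search, and `retrieve`, `build_grounded_prompt`, and the sample documents are hypothetical. With the google-genai SDK, the system instruction would typically be passed separately through the request configuration rather than pasted into the prompt.

```python
import re

# Persistent instruction, modeled on the ACME example in the text.
SYSTEM_INSTRUCTION = (
    "You are an assistant for ACME Corp. Answer only from the numbered sources. "
    "If the sources do not contain the answer, say so."
)


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank documents by word overlap with the question (stand-in for vector search)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(doc["text"])), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]        # drop irrelevant docs
    scored.sort(key=lambda pair: pair[0], reverse=True)      # best match first
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(question, documents, top_k=2):
    """Attach the top snippets as numbered sources so the answer can cite them."""
    snippets = retrieve(question, documents, top_k)
    sources = "\n".join(
        f"[{i}] ({doc['id']}) {doc['text']}" for i, doc in enumerate(snippets, start=1)
    )
    return (
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\n"
        "Cite the sources you use by number, for example [1]."
    )


docs = [
    {"id": "policy-7", "text": "Employees accrue 20 vacation days per year."},
    {"id": "policy-9", "text": "Expense reports are due within 30 days."},
    {"id": "faq-2", "text": "The cafeteria opens at 8 am."},
]
prompt = build_grounded_prompt("How many vacation days do employees get?", docs)
print(prompt)
```

Numbering the sources in the prompt is what makes citations traceable: each `[n]` in the answer maps back to a document id that can be shown to the user or logged for compliance.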

Fine-Tuning and Prompt Tuning: For organizations that require the model to adopt a specific style or incorporate additional training data, Google supports model tuning on Gemini (currently in controlled availability). In Vertex AI, teams can perform supervised fine-tuning on Gemini models using their own labeled examples. For instance, a company might fine-tune a Gemini variant on its past customer support transcripts so that the model learns domain-specific QA pairs and jargon. Google recommends techniques like LoRA (Low-Rank Adaptation) for efficient fine-tuning of these large models. LoRA allows adding new knowledge or style with a relatively small number of additional parameters, avoiding the need to retrain the entire huge model. Developers prepare training data (prompt and ideal completion pairs) and use Vertex’s tuning service to produce a custom checkpoint. This tuned model can then be hosted and used via the API (noting that some of the largest models might not support fine-tuning in all regions yet). In addition to full supervised fine-tuning, Google supports prompt tuning – essentially learning an optimal prefix prompt that guides the model, without changing model weights. This can achieve some of the benefits of fine-tuning (e.g. consistently following a desired format or policy) at lower risk. Moreover, function calling is available: developers can define “tools” or functions (e.g. an API to book a meeting room) that Gemini can invoke when appropriate in a conversation. This is similar to OpenAI’s function calling mechanism. It enables extending Gemini’s capabilities by having it call external functions with generated parameters – effectively letting the AI perform actions like looking up database info, triggering workflows, etc., in a controlled way. For example, one could integrate a “Create JIRA Ticket” function; when a user asks the assistant to log an IT issue, Gemini can populate and execute that function.
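The "Create JIRA Ticket" example can be sketched as a declaration plus a dispatcher on the application side. Everything here is illustrative: the declaration follows the common JSON-schema style for tool definitions, `create_jira_ticket` is a hypothetical stub rather than a real Jira call, and the `proposed` dictionary simulates what a model might return instead of calling Gemini.

```python
import json

# JSON-schema style declaration the model would be shown (field names are illustrative).
CREATE_TICKET_DECLARATION = {
    "name": "create_jira_ticket",
    "description": "Log an IT issue as a ticket.",
    "parameters": {
        "type": "object",
        "properties": {
            "summary": {"type": "string"},
            "priority": {"type": "string", "enum": ["low", "medium", "high"]},
        },
        "required": ["summary"],
    },
}


def create_jira_ticket(summary, priority="medium"):
    # Hypothetical stub; a real version would call the ticketing system's API.
    return {"ticket_id": "IT-1", "summary": summary, "priority": priority}


REGISTRY = {"create_jira_ticket": (CREATE_TICKET_DECLARATION, create_jira_ticket)}


def dispatch(function_call):
    """Validate a model-proposed call against its declaration, then execute it."""
    name = function_call["name"]
    args = function_call.get("args", {})
    if name not in REGISTRY:
        raise KeyError(f"model requested unknown function: {name}")
    declaration, func = REGISTRY[name]
    schema = declaration["parameters"]
    missing = [p for p in schema["required"] if p not in args]
    unexpected = [a for a in args if a not in schema["properties"]]
    if missing or unexpected:
        raise ValueError(f"bad arguments: missing={missing}, unexpected={unexpected}")
    return func(**args)


# Simulated model output: the model proposes a function name and arguments.
proposed = {"name": "create_jira_ticket",
            "args": {"summary": "Laptop will not boot", "priority": "high"}}
result = dispatch(proposed)
print(json.dumps(result))
```

The model only proposes the call; the application decides whether and how to run it. Validating arguments against the declaration before execution is one way to keep that "controlled way" of acting enforceable in code.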

Agent Orchestration and Developer Tools: On top of raw model calls, Google provides an Agent orchestration framework (Agentspace, now part of Gemini Enterprise) for building multi-step agents that use the model plus tools. Developers can write agent scripts or use the Agent Designer UI to specify how an agent should handle a task – e.g., “Step 1: search the knowledge base. Step 2: summarize findings. Step 3: ask user for clarification if needed. Step 4: draft an output.” The agent runtime handles looping through these steps, invoking the Gemini model or tools at each step, and managing the state (this is analogous to a LangChain-like chain, but on Google’s managed platform). Google’s Agent Development Kit (ADK) provides libraries and patterns to create such orchestrations, and Google is aligning it with open frameworks (for instance, it has examples with LangChain integration).
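The four-step agent described above can be sketched as a list of functions that share a state dictionary. This is a plain-Python illustration, not the ADK or Agent Designer API: `fake_model` is a stand-in for a Gemini call so the example runs offline, and all step names are hypothetical.

```python
def fake_model(prompt):
    # Stand-in for a Gemini call so the sketch runs without network access.
    return f"MODEL({prompt[:40]})"


def search_knowledge_base(state):
    # Step 1: keep knowledge-base entries that mention the task keyword.
    state["findings"] = [d for d in state["kb"] if state["task"].lower() in d.lower()]
    return state


def summarize(state):
    # Step 2: ask the model to condense whatever was found.
    state["summary"] = fake_model("Summarize: " + " | ".join(state["findings"]))
    return state


def clarify_if_needed(state):
    # Step 3: flag that the user should be asked when the search found nothing.
    state["needs_clarification"] = not state["findings"]
    return state


def draft(state):
    # Step 4: produce the final output, or a clarifying question.
    if state["needs_clarification"]:
        state["output"] = "Could you clarify what you are looking for?"
    else:
        state["output"] = fake_model("Draft a reply using: " + state["summary"])
    return state


STEPS = [search_knowledge_base, summarize, clarify_if_needed, draft]


def run_agent(task, kb):
    """Run each step in order, recording a trace of what was executed."""
    state = {"task": task, "kb": kb, "trace": []}
    for step in STEPS:
        state = step(state)
        state["trace"].append(step.__name__)
    return state


kb = ["Onboarding guide for new hires", "VPN setup instructions"]
print(run_agent("vpn", kb)["output"])
print(run_agent("payroll", kb)["output"])
```

Keeping the steps as an explicit list makes the control flow easy to inspect, and the trace gives an audit trail of which steps ran for a given request.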

For coding tasks, Google offers Gemini Code Assist (an evolution of its earlier Codey models) for AI coding suggestions in IDEs. And for command-line enthusiasts, the aforementioned Gemini CLI is a powerful developer companion: it lets devs chat with Gemini from their terminal to generate code, explain errors, manipulate cloud resources, etc. With the new CLI Extensions, developers can even plug Gemini into their devops workflows – e.g. an extension might allow Gemini to fetch cloud logs or run a test suite when asked. Major devtool companies like Atlassian, MongoDB, Postman, Stripe and others have built CLI extensions so that Gemini can interface with their services from the command line. This effectively turns the CLI into a “personalized command center” for developers, powered by AI.

Lastly, integration SDKs are available for various languages (Python, JavaScript, Go) so developers can embed Gemini into their applications. And with support for MCP (Model Context Protocol) and emerging standards, integrating Gemini alongside other AI systems or agents is easier. Google is also working on standards for agent transactions – e.g. an Agent Payments Protocol (AP2) for secure financial actions by agents – hinting at future capabilities where AI agents can complete tasks like purchasing or data entry in a governed way.

In summary, Gemini Enterprise is highly customizable: whether through prompt engineering, grounding with your data, lightweight tuning, or building complex agents with tools, enterprises have many knobs to align the AI to their specific workflows. Google provides not just the models, but the plumbing to inject context and integrate actions, which is crucial for real business use (where pure end-to-end AI often isn’t enough – you need it connected to databases, APIs, and policies). By offering these customization mechanisms, Google enables enterprises to create very domain-specific AI assistants (for example, a “Regulatory Compliance Analyst” bot or a “SAP Finance Query” bot) that still benefit from the general intelligence of the Gemini model. And all of this can be done while keeping the base model securely sandboxed – inputs and outputs can be filtered and audited, and proprietary data used in prompts is not used to retrain Google’s models without permission.

Security, Governance, and Compliance Framework

For enterprise adoption, trust is as important as raw capability. Google has engineered Gemini Enterprise with extensive security and compliance measures, aiming to meet the strict requirements of corporate IT. Let’s break down how data is protected and what certifications/trust features are in place:

Data Privacy and Isolation: Google emphasizes that customer data is not used to train Gemini’s foundation models and is not visible to other customers. In Google Workspace’s implementation, any content a user submits to Gemini (e.g. a document to summarize) is not used to improve the model and “is not reviewed by humans,” providing assurance of privacy. In Google Cloud’s Vertex AI terms, Google likewise offers data isolation commitments – data stays within the customer’s tenant and is only used to generate the output for that customer. This addresses a common enterprise concern about generative AI: companies don’t want their sensitive prompts or outputs feeding a vendor’s model updates. Google’s approach here is similar to Microsoft’s Copilot (which also pledges not to use customer Office 365 data to train). In addition, all data exchanges are encrypted (in transit and at rest). By default, content indexed by Gemini Enterprise’s connectors is stored encrypted with Google-managed keys, but customers can opt for Customer-Managed Encryption Keys (CMEK) for added control. CMEK support is available when using US or EU regional endpoints for the Gemini APIs. Some customers even integrate External Key Managers/HSMs so that Google’s servers must request decryption from the customer’s system, giving an extra layer of key custody.

Access Control and SSO: Gemini Enterprise ties into enterprise Single Sign-On (SSO) and identity systems, so that user authentication is consistent with the company’s existing access policies. It leverages Google Cloud Identity or federated SAML/OAuth logins, meaning users log in with their corporate credentials. Once authenticated, every query or agent action is attributed to a user identity for auditing. The platform enforces the user’s permissions when retrieving any data – e.g. if Jane Doe asks the assistant to find “project Foo status,” and that info lives in a Drive folder or Confluence space she doesn’t have access to, Gemini will not include it in the answer. This permission-aware response mechanism prevents data leakage across departments. Administrators can further set role-based policies on what agents a given group can use or what connectors are enabled. For instance, an admin could disable the use of a “Twitter posting agent” for most users, or require that only HR staff can query the HR data store. Additionally, Google’s Access Transparency logs (a Google Cloud feature) can be enabled – this provides an immutable log of any access that Google administrators or automated processes had to your content, enhancing trust in Google’s operations.

Model Output Safety: To handle the well-known risks of LLMs (like hallucinations or inappropriate content), Gemini Enterprise uses multi-layered safeguards. Model Armor, as mentioned, is a cloud service that does prompt and response scanning for security issues (malicious instructions, data exfiltration attempts, etc.). It can redact or block certain inputs/outputs in real-time before they cause harm. Google also allows admins to configure content moderation settings for Gemini – e.g. defining what the AI should do if a prompt requests disallowed content. These settings align with Google’s AI safety policies (to prevent hate speech, self-harm advice, etc.). There is a “safety guidance” system and toxicity filters by default. However, Google warns (and any expert knows) that no AI is 100% hallucination-free. They encourage implementing validation steps for critical use cases. For example, if an agent is set to execute autonomous actions like sending an email or approving an invoice, it’s wise to use human-in-the-loop review or at least a test run. Enterprises are advised to establish “guardrail” policies: e.g. requiring certain agent-generated outputs to be approved by a manager before applying, or preventing the AI from giving financial advice outright. The platform supports these controls (for instance, an admin could disable code execution tools globally, or require that the finance agent runs in “proposal mode” only). Logging of all AI actions also ensures any incidents can be traced and analyzed. Google has also built a feedback loop – users can thumbs-up/down answers in the interface, and these signals help improve the relevance (either via fine-tuning or search tuning) over time.
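One way to picture the "proposal mode" guardrail is an approval gate that queues high-risk actions until a human signs off. The sketch below is a generic pattern, not a Gemini Enterprise API: `ProposedAction`, `ActionGate`, the action names, and the approver address are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedAction:
    agent: str
    action: str            # illustrative labels, e.g. "approve_invoice"
    payload: dict
    status: str = "pending"


@dataclass
class ActionGate:
    """Hold high-risk agent actions until a human approves them."""
    high_risk_actions: set
    executed: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def submit(self, proposal, executor):
        if proposal.action in self.high_risk_actions:
            # Queue only: nothing runs until approve() is called.
            self.audit_log.append(("queued", proposal.agent, proposal.action))
            return proposal
        return self._run(proposal, executor, approver="auto")

    def approve(self, proposal, executor, approver):
        return self._run(proposal, executor, approver)

    def _run(self, proposal, executor, approver):
        executor(proposal.payload)
        proposal.status = "executed"
        self.executed.append(proposal)
        self.audit_log.append(("executed", proposal.agent, proposal.action, approver))
        return proposal


sent = []  # stands in for the side effect (an email sent, an invoice approved)
gate = ActionGate(high_risk_actions={"approve_invoice"})

invoice = gate.submit(ProposedAction("finance-agent", "approve_invoice", {"id": 42}), sent.append)
note = gate.submit(ProposedAction("notes-agent", "save_summary", {"text": "ok"}), sent.append)
gate.approve(invoice, sent.append, approver="manager@example.com")
print(gate.audit_log)
```

Low-risk actions run immediately, while the invoice approval only executes after the explicit `approve` call. Every transition lands in the audit log, which lines up with the logging and traceability points made above.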

Compliance Certifications: Google has worked to align Gemini Enterprise with major compliance standards. Since the platform builds on Google Cloud and Workspace foundations, it inherits many of Google’s existing certifications. As of late 2024, Google announced that the Gemini app (web and mobile) attained HIPAA compliance and achieved certifications for ISO/IEC 27001, 27017, 27018, 27701 (information security and cloud privacy standards), as well as ISO 9001 (quality management) and ISO 42001 – the new AI Management System standard. In fact, Google noted Gemini was the first productivity AI offering to be certified on ISO 42001, indicating it has been audited for responsible AI development and risk management. Additionally, the Gemini service is SOC 2 and SOC 3 compliant (audited for security, availability, confidentiality controls). For U.S. public sector customers, Google in late 2024 submitted Gemini for FedRAMP High authorization – meaning it is on the path to being cleared for use with highly sensitive government data. While FedRAMP authorization may be pending, the Google infrastructure it runs on is FedRAMP certified, and Google plans to include Gemini Enterprise in future audits. In Google Cloud documentation, it’s stated that Gemini Enterprise will be included in upcoming certification audits since it uses the same underlying controls as other Google Cloud services. For healthcare clients, HIPAA support is crucial – Google confirms that Workspace with Gemini can support HIPAA-regulated workloads (with the proper Business Associate Agreement in place). In short, the platform is aligning with the compliance checkboxes (ISO, SOC, HIPAA, GDPR, etc.) that enterprises and regulated industries require. Enterprises should still review the specifics (for instance, at launch one document noted that Gemini in Chrome browser wasn’t yet FedRAMP compliant), but the trajectory is that Gemini Enterprise will meet or exceed the compliance posture of Google’s cloud in general.

Geographical Data Controls: Gemini Enterprise allows data residency choices – admins can choose to store indexed data in US or EU multi-regional locations to satisfy data locality requirements. Model processing can also be configured by region (e.g. EU users’ queries served from EU data centers), which is important for GDPR compliance. In addition, VPC Service Controls can be used to fence the Gemini API so that it only accepts traffic from the company’s private cloud networks, mitigating data exfiltration risks. Access Transparency logs, as mentioned, give visibility into Google’s own access to data (which is usually zero, aside from automated systems).

Governance Best Practices: Google provides guidance to customers on setting up an AI governance board, pilot phases, and risk assessments when rolling out Gemini. They advise a staged rollout: sandbox testing, then limited workflows with human oversight, then scaled deployment with monitoring. They also highlight the importance of change management – e.g. having a policy for how to handle model updates (since Google may update the foundation models with new versions) and how to re-validate critical prompts or agents when that happens. Vendor lock-in is another risk they mention – while Google pledges openness, an organization should ensure it can export its agent configurations and prompt libraries should it ever need to migrate. Google’s use of open standards (like Agent2Agent) is partly to ease such transitions, but it’s still wise for enterprises to negotiate contractual rights to their prompt and agent data. On the flip side, Google’s very deep integration across Cloud, Workspace, and data means that a lot of value is realized if you fully adopt the stack – which can make switching away more difficult later (a classic ecosystem lock-in scenario, not unique to Google).
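One way to act on the re-validation advice is to keep a small suite of "golden" prompts and rerun it whenever the underlying model version changes. The sketch below assumes a very simple pass criterion (required phrases must appear in the output); `GoldenCase`, `revalidate`, and the stand-in `fake_model` are hypothetical names for illustration, and a real suite would call the deployed model instead.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class GoldenCase:
    """A critical prompt plus phrases its answer is expected to contain."""
    name: str
    prompt: str
    must_contain: List[str]


def revalidate(generate: Callable[[str], str], cases: List[GoldenCase]) -> List[str]:
    """Run every golden case and return the names of the ones that fail."""
    failures = []
    for case in cases:
        output = generate(case.prompt).lower()
        if not all(phrase.lower() in output for phrase in case.must_contain):
            failures.append(case.name)
    return failures


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    if "refund" in prompt:
        return "Refunds are issued within 30 days of purchase."
    return "I am not sure."


cases = [
    GoldenCase("refund_policy", "What is our refund window?", ["30 days"]),
    GoldenCase("escalation", "Who approves invoices over $10k?", ["finance manager"]),
]
failed = revalidate(fake_model, cases)
print(failed)
```

Passing the model in as a plain callable means the same suite can be pointed at the old and the new model version and the two failure lists compared before a rollout.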

In essence, Google has put significant thought into earning enterprise trust: Gemini Enterprise comes with a “comprehensive set of privacy and security certifications” and controls, and it is designed for admin oversight and data protection from day one. Early enterprise testers (like banks and healthcare orgs) have validated these features in pilots, which is why we’re seeing case studies like Banco BV and HCA Healthcare comfortable putting AI into core workflows. Of course, adopting generative AI still requires responsible use – companies should enforce their own policies (Google’s tools help but can’t ensure, for example, that an employee won’t share something sensitive in a prompt). But compared to the wild west of consumer AI chatbots, Gemini Enterprise provides a controlled, auditable environment where enterprise data can be harnessed safely. As Google succinctly puts it, it delivers “built-in trust” features to make organizations confident in deploying AI.

Developer Tooling and Integration

Gemini Enterprise is as much a developer platform as it is an end-user product. Google has released a rich set of tools, SDKs, and integration options to help developers and IT teams build on Gemini and integrate it into various systems. We’ve touched on some (SDKs, CLI, etc.), but let’s summarize the key developer tooling:

- Google Gen AI SDKs (APIs in multiple languages): Official libraries for Python, JavaScript/TypeScript, Go, and more let developers call Gemini models with just a few lines of code. These handle tokenization, streaming, and error handling. There’s also a REST API and gRPC interface for those who prefer direct calls. The API reference includes examples for content generation, chat, embeddings, and even specialized endpoints (e.g. an image generation endpoint for the Imagen model, a speech-to-text endpoint, etc.; see ai.google.dev). Moreover, Google provides a Cookbook on GitHub with ready-made examples and prompt designs for common tasks (summarization, Q&A, classification, etc.), which developers can adapt.

- Templates and Solution Accelerators: Google Cloud has published AI solution blueprints (via their Architecture Center and GitHub) that show how to combine Gemini with other GCP services. For example, a reference architecture for an “AI-powered support chatbot” might include Vertex AI (Gemini) + Cloud Search + Dialogflow CX for voice, etc. Google’s partners (like SADA, Deloitte, Accenture) also provide templates – e.g. a pre-configured agent for call center automation or a “sales coach” agent that integrates with CRM data. These templates give developers a starting point, which they can then customize in the Agent Designer or via code.

- Agent Orchestration and Workflow Tools: Google’s Agentspace framework (now part of Gemini Enterprise) includes both a visual builder and libraries to manage agent workflows. Developers can define custom agent “skills” that involve sequences of prompts, tool calls, and decisions. For instance, an agent skill might be: “If user asks a question, first search the knowledge base (tool call), then feed results + question to Gemini model (prompt), then if confidence is low, escalate to human.” These can be configured declaratively. Google’s goal is to make orchestrating complex AI behaviors easier than piecing together Python scripts. The platform handles context tracking between steps (with those million-token windows, the entire intermediate context can be passed along). This is effectively Google’s answer to orchestration frameworks like LangChain – but offered as a managed cloud service. It’s worth noting Google is working with the community (LangChain integration is documented, and the Agent2Agent protocol and Model Context Protocol are being co-developed with input from others).

- Gemini CLI and Extensions: We covered the CLI from a customization perspective, but from a tooling view: the Gemini CLI (an open-source tool on geminicli.com) lets developers chat with the Gemini model in their terminal and automate dev tasks. Google reported over one million developers tried it within 3 months of launch – it’s become quite popular for quick code help or cloud management via natural language. With CLI Extensions, a developer can integrate any service or API to respond to custom commands. For example, Atlassian built a CLI extension so that a developer can type, “@jira create bug ticket for failing login test” and the Gemini CLI will use Atlassian’s extension to actually create the Jira issue after confirming details. This shows how Gemini acts as the glue between natural language intent and real developer actions. Companies can create their own internal CLI extensions too – e.g. one that knows how to spin up a standard dev environment or fetch specific internal metrics when asked. All these extensions run locally or in the user’s environment, ensuring security (no secrets get sent to the model; rather the model’s output triggers the local extension logic).

- Integrations in IDEs and Apps: Google is integrating Gemini into various interfaces. For example, Cloud Shell (Google Cloud’s online terminal) has an AI assistant panel using Gemini to help with command suggestions, code fixes, etc. There are plugins for VS Code and JetBrains IDEs bringing “Copilot-like” code completion and chat (Gemini Code Assist, previously branded “Duet AI”). In Google Sheets, an AppSheet integration allows creating AI-driven apps (AppSheet can use Gemini to parse unstructured data or generate formulas on the fly). There’s also Apigee integration – Google’s API management tool can embed Model Armor and Gemini calls in API workflows, meaning developers can put an AI check or response generation step in front of any API. Essentially, Google is weaving Gemini into many corners of its ecosystem, giving developers options to hook in at whichever point is most useful.

- Monitoring and Debugging Tools: Vertex AI provides real-time monitoring of model usage – developers can see how many tokens each request used, latency, and any errors. The logs will even capture the prompts (if opted in), which can be crucial for debugging why an agent responded a certain way. There are tools for evaluating prompt quality and doing A/B testing of different prompt versions. Google has also published a “Prompt Engineering” guide and best practices in its docs, and even integrated some prompt optimization features (like prompt context caching to reuse token allotment efficiently, and token counting utilities to ensure a prompt stays within limits; see ai.google.dev).

- Community and Support: Google has a community forum (discuss.ai.google.dev) and programs like Google Cloud Innovators specifically for AI developers. They have also launched the Google Skills Boost platform with free training on Gemini Enterprise and AI development. The GEAR (Gemini Enterprise Agent Ready) program is an educational sprint to certify developers in building AI agents, with a goal to train one million developers on Gemini tools. This is analogous to what Microsoft did with Power Platform certifications – Google is trying to cultivate a skilled community around its AI platform. For enterprise support, Gemini Enterprise customers have access to Google Cloud’s support plans, and Google is also establishing an elite “Delta” team (AI experts) that can embed with customer teams for complex deployments.
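As a rough illustration of the "few lines of code" claim for the Gen AI SDKs, the sketch below calls a Gemini model through the `google-genai` Python package. It assumes the package is installed and that an API key is available in a `GEMINI_API_KEY` environment variable; the `build_prompt` and `summarize` helpers are hypothetical wrappers written for this example, and an enterprise deployment going through Vertex AI would construct the client with project and location settings instead of an API key.

```python
import os


def build_prompt(document: str, max_words: int = 50) -> str:
    """Assemble a summarization prompt (hypothetical helper for this sketch)."""
    return f"Summarize the following text in at most {max_words} words:\n\n{document}"


def summarize(document: str, model: str = "gemini-2.5-flash") -> str:
    """Send the prompt to a Gemini model and return the generated text."""
    # Imported lazily so this module loads even where the SDK is not installed.
    from google import genai  # pip install google-genai

    # Assumption: the key is supplied via the GEMINI_API_KEY environment variable.
    client = genai.Client(api_key=os.environ["GEMINI_API_KEY"])
    response = client.models.generate_content(
        model=model,
        contents=build_prompt(document),
    )
    return response.text
```

With credentials in place, `print(summarize("Gemini Enterprise combines models, connectors, and agents."))` would print the model's summary. Swapping the `model` argument is how the Flash versus Pro trade-off described earlier shows up in code.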

All these developer tools and programs signal that Google views Gemini Enterprise not just as a static product, but as a living platform that developers will extend and co-create on. For a product lead or enterprise tech decision-maker, this means investing in Gemini Enterprise is not just getting a chatbot – it’s getting a foundation for custom AI development, backed by Google. The platform can tie into your CI/CD pipeline, your data lakes, your workflow engines, etc., thanks to the integration points. This is quite important strategically: it can help future-proof an organization’s AI efforts. Instead of one-off AI pilots here and there, Google’s pushing for a unified platform where all those experiments can converge, share resources (and compliance guardrails), and be centrally managed.
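The agent "skill" quoted in the orchestration bullet above (search the knowledge base, prompt the model with the results, escalate when confidence is low) can be sketched as ordinary code. This is not the Agentspace or Agent Designer API: the function names, the `SkillResult` type, the idea that the model call returns a numeric confidence score, and the 0.7 threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class SkillResult:
    answer: str
    escalated: bool


def answer_with_escalation(
    question: str,
    search: Callable[[str], List[str]],
    ask_model: Callable[[str], Tuple[str, float]],
    min_confidence: float = 0.7,  # hypothetical threshold
) -> SkillResult:
    # Step 1: tool call against the knowledge base.
    passages = search(question)
    # Step 2: prompt the model with the retrieved context plus the question.
    context = "\n".join(f"- {p}" for p in passages)
    prompt = f"Context:\n{context}\n\nQuestion: {question}"
    answer, confidence = ask_model(prompt)
    # Step 3: hand off to a human when the confidence score is low.
    if confidence < min_confidence:
        return SkillResult("Routing this to a human agent.", escalated=True)
    return SkillResult(answer, escalated=False)


def demo_search(query: str) -> List[str]:
    """Offline stand-in for a knowledge base search tool."""
    kb = {"vpn": "Reset the VPN token from the IT portal."}
    return [text for key, text in kb.items() if key in query.lower()]


def demo_model(prompt: str) -> Tuple[str, float]:
    """Offline stand-in for a model call that also reports confidence."""
    if "VPN token" in prompt:
        return ("Reset your VPN token in the IT portal.", 0.9)
    return ("Unknown.", 0.2)
```

Calling `answer_with_escalation("How do I fix my VPN?", demo_search, demo_model)` returns the model's answer, while a question the knowledge base cannot ground falls through to the human hand-off. A managed platform would express the same three steps declaratively rather than in hand-written control flow.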

Conclusion

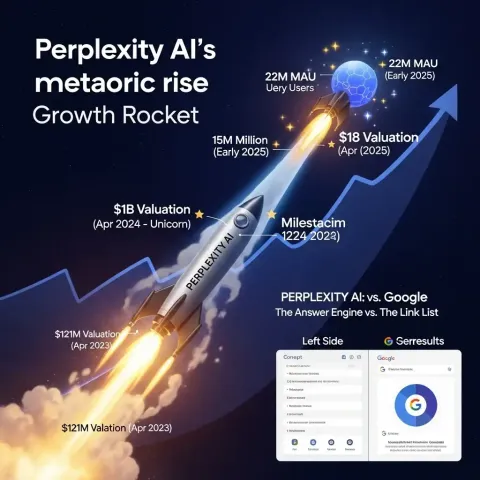

In this technical deep dive, we’ve seen that Gemini Enterprise is far more than an LLM API. It is a comprehensive enterprise AI platform that marries cutting-edge models (the Gemini family) with the practical infrastructure needed in large organizations – data connectors, deployment flexibility, robust security, and rich customization. Architecturally, it leverages Google’s full-stack innovation: from custom silicon in data centers, to world-leading multimodal models, up through intuitive tools that let any employee build an AI agent. This vertical integration yields advantages in performance, scale, and reliability (as evidenced by the 1.3 quadrillion monthly token throughput Google is already handling across its AI surfaces).

For deployment, Gemini Enterprise can fit into various IT strategies – whether you are all-in on Google Cloud, a hybrid shop, or even primarily a Microsoft SaaS customer, you can deploy it in a way that complements your environment. Its APIs and SDKs make it a natural addition to any modern application stack, and its Workspace integration means user-facing impact can be immediate (AI in email, documents, meetings, without needing to write a single line of code).

Crucially, Google has baked in enterprise governance at every layer: data stays under corporate control, actions are auditable, and the system can be configured to comply with stringent regulations. The array of certifications and the transparency features (like Access Transparency, CMEK) demonstrate Google’s commitment to meeting enterprise trust requirements (cloud.google.com). This has been validated by early adopters in sensitive industries – e.g. healthcare providers trusting it with patient info (under HIPAA), banks using it for analytics, etc., which speaks volumes.

From a developer perspective, Gemini Enterprise provides a rich playground to innovate. Whether through no-code agent design or full-code integrations, developers can bend the platform to solve their unique problems. They can build an agent that spans silos – e.g., reads a CRM, queries a database, and sends an email – all orchestrated with Gemini’s intelligence. And thanks to tools like the Gemini CLI and extension framework, even developer workflows themselves can be optimized by AI (it’s quite meta: AI helping build AI solutions).

In sum, Gemini Enterprise is a bold effort by Google to deliver an integrated AI fabric for the enterprise. Technically, it stands at the intersection of LLM prowess, enterprise search, and workflow automation – areas that used to be separate. By unifying them, Google aims to enable “true business transformation” beyond basic chatbots (blog.google). Of course, no platform is perfect or magic. Success with Gemini will require proper planning (pilots, user training, oversight). But the tools are there to tackle the challenges.

For product leaders and enterprise architects, the takeaway is that Google has assembled a comprehensive toolkit for bringing generative AI into every workflow – with the technical depth (in models and infrastructure) and the enterprise features (in security and customization) needed. In the next blog, we’ll explore how this platform stacks up in real business use cases and against competitors like Microsoft’s Copilot, OpenAI, Anthropic, and others in the strategic landscape. But from an engineering standpoint, Gemini Enterprise is undoubtedly a milestone in enterprise AI platforms, one that encapsulates Google’s AI research and cloud capabilities into a cohesive offering. As Sundar Pichai described it, it’s designed to be “the new front door for AI in the workplace,” bringing the full power of Google’s AI to every employee in a secure, contextual, and scalable way.

Sources:

- Google Cloud – What is Gemini Enterprise?

- Google Cloud – Introducing Gemini Enterprise (Thomas Kurian)

- Google Blog – Gemini Enterprise Announcement (S. Pichai, Oct 2025)

- Reuters – Google launches Gemini Enterprise AI platform

- Google Cloud Docs – Gemini 2.5 Pro Model Card

- TeamAI – Understanding Different Gemini Models

- WindowsForum (Analyst repost) – Gemini Enterprise All-in-One AI Platform

- SADA (Google partner) – 5 Things to Know about Gemini Enterprise

- Google Support – Workspace with Gemini FAQ

- Google Cloud – Compliance and Security (Gemini Enterprise)

- Google Workspace Blog – Gemini app certifications

- iPhone in Canada – Gemini Enterprise aims at Copilot/OpenAI