Author: Boxu Li at Macaron

Introduction:

Microsoft’s latest update to Windows Copilot has quietly but significantly expanded the AI’s reach. In an October 2025 rollout, Copilot gained the ability to connect with Google services – Gmail, Google Drive, Google Calendar, and Contacts – alongside Microsoft’s own Outlook email, OneDrive, and more[1]. This move breaks down longstanding silos between the Microsoft and Google ecosystems. With a simple opt-in, Windows users can now use Copilot to search and synthesize personal information across accounts and apps, all through a single AI assistant interface[2][3]. It’s an unusual degree of cross-platform cooperation: Microsoft’s AI actively reaching into Google’s domain to help users get things done.

In this deep dive, we’ll analyze what these new connectors do and how they function inside Copilot, and contrast Microsoft’s approach with rivals like Google’s Duet AI/Gemini, Notion AI, and Perplexity’s Comet. We will explore the highest-value use cases unlocked – from unified search and email summarization to meeting prep and document creation – and reflect on what this trend means for the future of agentic computing, multimodal interaction, and assistant-driven user experiences. The tone here is practical and strategic, cutting through marketing fluff to give product leaders a clear view of where personal AI assistants are headed.

Copilot’s Gmail, Drive, and Calendar Connectors – How They Work

At its core, Microsoft’s connector update allows Copilot on Windows to serve as a universal search bar and helper across your personal content, regardless of whether that content lives in a Microsoft app or a Google service. Once you enable the connectors (via a toggle in Copilot’s settings), the AI gains permission – with your explicit consent – to access your data in Gmail, Google Drive, Calendar, Contacts, Outlook, and OneDrive[3].

What can Copilot do with this access? In this initial release, the focus is on natural language search and retrieval. You can ask Copilot questions or commands like “What’s the email address for Sarah?” or “Find my school notes from last week”, and Copilot will retrieve the relevant information from whichever connected account holds it[4]. For example, if Sarah’s email is stored in your Google Contacts or an Outlook address book, Copilot will surface that. If your “school notes” are Google Docs in Google Drive (or Word files on OneDrive), Copilot can find those files and present them. The assistant essentially treats your disparate storage and communication silos as one unified knowledge base.

Microsoft’s own demo highlighted how a single query can pull from multiple sources. A user could ask for all invoices from a certain client, and Copilot might check both Outlook and Gmail inboxes to compile matches[5]. Or you might recall saving a PDF to the cloud but not remember where – Copilot can search both OneDrive and Google Drive simultaneously to locate it. All of this happens through the Copilot chat interface on Windows, meaning the user doesn’t have to manually open a browser, launch apps, or run separate searches in Gmail and in Explorer. It’s a frictionless experience once set up.

Importantly, these connections are opt-in and granular. By default, Copilot won’t touch your Gmail or Google data until you link those accounts in settings[6]. You can choose to connect some services and not others (e.g. link Gmail but not Google Drive, or vice versa), so users retain control. Microsoft also limits capabilities to reading and searching for now: as a guardrail, Copilot isn’t automatically sending emails or adding calendar events via these connectors in this first iteration. It reads from your data rather than writing to it, and it generates new content only when you explicitly ask. This cautious approach is likely intentional to build user trust, given the sensitivity of personal emails and files.
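Microsoft has not documented how these permissions are enforced internally. As a minimal sketch under that caveat, the policy described above (everything off by default, per-service opt-in, read-only access in the first release) could be modeled as follows; `ConnectorSettings`, `READ_OPERATIONS`, and the service keys are hypothetical names.

```python
from dataclasses import dataclass, field

# Hypothetical set of operations that count as "reading" in the first release.
READ_OPERATIONS = {"search", "read"}


@dataclass
class ConnectorSettings:
    """Hypothetical per-user connector policy: opt-in per service, read-only by default."""

    enabled: set[str] = field(default_factory=set)  # empty set: nothing linked yet
    read_only: bool = True  # first-release guardrail: no sending or scheduling

    def enable(self, service: str) -> None:
        self.enabled.add(service)

    def disable(self, service: str) -> None:
        self.enabled.discard(service)

    def allowed(self, service: str, operation: str) -> bool:
        """Permit an operation only on a linked service, and only reads while read_only is set."""
        if service not in self.enabled:
            return False
        return operation in READ_OPERATIONS or not self.read_only
```

Keeping the write check separate from the opt-in check means a later release could lift `read_only` without touching which services the user has linked.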

It’s worth noting that Microsoft has paired the connector launch with another new feature: document creation and export via Copilot. Now you can instruct Copilot to spin up a Word document, Excel spreadsheet, PowerPoint deck or PDF from a prompt, and even export the content directly to those formats[7]. For instance, you might ask “Draft a project status update and export to Word,” and Copilot will comply. This complements the connectors: the assistant not only finds information across accounts but can also help you produce new artifacts (emails, docs, etc.) with that information. The long-term vision is an AI that both gathers and generates content seamlessly as your cross-app productivity partner.

Inside the Copilot Experience: Unified Search and Contextual Answers

So what is the user experience like when using Copilot with these connectors? In practical terms, Copilot remains anchored as a sidebar/chat on Windows 11 (summoned with a click or shortcut). The difference is in how it understands your query and composes the answer. When you ask something that involves personal data, Copilot securely queries your connected services. Under the hood, Microsoft likely calls Google’s APIs (for Gmail, Drive, Calendar, and Contacts) and Microsoft Graph (for Outlook and OneDrive) to fetch relevant results, which the AI model then summarizes or presents directly.

In Copilot’s interface, answers that come from your personal data will typically be presented with context. For example, if you ask for a contact’s email address, Copilot might simply display the email (e.g. “Sarah’s email is sarah@example.com”). If you ask for files or notes, Copilot could list a few filenames or snippets with an indication of which service they came from (e.g. “Found Marketing Plan.docx in OneDrive, last modified Sept 5” or “Found Q3 OKRs in Google Drive, modified last week”). Microsoft’s design for Copilot emphasizes transparency, so users know the source – similar to how Bing Chat cites its web sources. Early previews showed source tags like “Gmail” or “OneDrive” next to results, which helps build trust that Copilot isn’t hallucinating but truly found an item in your account.
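Microsoft has not published Copilot’s retrieval pipeline, so the following is only a sketch of the fan-out-and-tag pattern the two paragraphs above describe: query each linked service in parallel, then label every hit with the service it came from. The search functions are stand-ins for real Gmail, Google Drive, or Microsoft Graph calls, and `unified_search`, `Result`, and `SearchFn` are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Result:
    title: str
    source: str  # e.g. "Gmail" or "OneDrive", shown to the user as a source tag


# Hypothetical connector signature: takes the user's query, returns matching titles.
SearchFn = Callable[[str], list[str]]


def unified_search(query: str, connectors: dict[str, SearchFn]) -> list[Result]:
    """Query every linked connector in parallel and merge the source-tagged hits."""
    with ThreadPoolExecutor(max_workers=max(1, len(connectors))) as pool:
        futures = {name: pool.submit(fn, query) for name, fn in connectors.items()}
    # Leaving the `with` block waits for all futures to finish.
    results: list[Result] = []
    for name, future in futures.items():
        try:
            hits = future.result()
        except Exception:
            continue  # one failing service should not sink the whole answer
        results.extend(Result(title, name) for title in hits)
    return results
```

Skipping a failed connector keeps one expired token from blanking the whole answer; a real system would also tell the user which source it could not reach rather than dropping it silently.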

The value of this unified approach becomes clear the first time you use it: no more mentally indexing “Was that conversation in Gmail or Outlook? Where did I save that PDF?” You just ask Copilot, and it figures out the location for you. It’s essentially an OS-level smart search powered by AI understanding of your query. Windows has long had search indexing, but Copilot takes it to the next level by using natural language and by spanning multiple cloud accounts beyond the local machine.

There are of course limits. Initially, Copilot connectors handle search and simple retrieval; they might not yet support complex multi-step requests (e.g. “Find all emails from my boss about Project Zeus and draft a summary of the key points”). For now you might have to break that into steps: ask Copilot to find the emails, then ask it to summarize them. Over time, we can expect the AI to handle such multi-step agentic queries more fluidly as the integration deepens. Microsoft is likely gathering feedback from this Windows Insider release[8][9] before expanding capabilities further.

Microsoft vs Google vs The Rest: Divergent Strategies for AI Assistants

Microsoft’s cross-platform assistant strategy stands in contrast to those of its peers. By opening Copilot to Google’s domain, Microsoft is signaling that user convenience trumps ecosystem lock-in – a bold play that serves Windows users who rely on Google services. How does this compare to Google’s own AI assistant in Workspace, or to Notion’s and Perplexity’s approaches? Let’s examine the key differences in capabilities, user experience, and platform strategy:

Google Duet AI (Gemini) – Deep Integration, Same Ecosystem

Google’s answer to Copilot is Duet AI for Google Workspace, now powered by Google’s Gemini models and since rebranded as Gemini for Google Workspace. Duet is an AI collaborator embedded across Gmail, Docs, Drive, Slides, Meet, and more[10][11]. Its capabilities range from helping you draft emails and documents, to generating images in Slides, to summarizing long chats or meeting transcripts. For example, in Gmail you can click the “Help me write” option to have Duet draft a reply, or in Docs ask it to summarize a document. In Slides, Duet can create visuals or build a presentation outline from a prompt[12]. Essentially, Google has woven AI features into each app’s UI: a side panel or menu where Duet can be invoked to assist with the current context.

When it comes to searching across apps, Google has begun enabling some cross-app intelligence, though within its own ecosystem. Google announced plans for Duet AI to “answer complex queries by searching across your messages and files in Gmail and Drive” and to summarize documents in a chat space[13]. In practice, this is surfacing as an enhanced Google Chat experience – you can query the AI in Chat and it can pull info from your Gmail and Drive to answer. For instance, you might ask in Chat, “Summarize the budget proposal Doc that John shared with me and any related emails,” and Duet could retrieve the document from Drive and relevant Gmail threads, providing a consolidated answer. This is conceptually similar to Copilot’s unified search, but limited to Google’s world. Duet won’t reach into, say, your Outlook inbox or OneDrive, since Google’s priority (understandably) is to keep you within Workspace.

From a UX perspective, Google’s approach means the AI is context-aware within each app. Duet appears as a side panel in apps like Gmail and Google Docs (represented by an icon, often a little sparkle or the Duet logo). You might be reading an email and click Duet for options like “Summarize this thread” or “Draft a response.” Or in Google Drive, you could ask Duet to “find files about Project Atlas” which effectively searches Drive. The design is such that the AI feels like a built-in assistant for each specific task, rather than one omnipresent chatbox. The benefit is a tailored experience – Duet knows what app you’re in and provides relevant help (e.g. formatting help in Sheets, slide design in Slides, etc.). The drawback is fragmentation: the user interacts with Duet in slices, rather than having a single place to converse with the AI about anything.

Strategically, Google is leveraging Duet (and the Gemini models behind it) to reinforce the Workspace value proposition. It’s a premium add-on (about $30 per user per month for enterprises) that directly competes with Microsoft 365 Copilot pricing[14]. Google’s platform strategy remains one of ecosystem containment – the AI is a reason to use Google’s apps more, and there’s no indication Google will let its assistant natively touch Microsoft services the way Microsoft is embracing Google’s. In short, Google is saying: “Keep your data in Workspace, and our AI will be your expert assistant.” This resonates for companies already Google-native, but it means users in mixed environments (Google for some things, Microsoft for others) don’t get much help bridging the gap – that’s exactly the gap Microsoft aims to fill with Copilot on Windows.

It’s also worth noting Google’s emphasis on AI model strength and modality. Gemini, Google’s advanced generative AI, is touted to bring multimodal capabilities (vision, text, etc.) and improved reasoning. We might soon see Duet handle images or charts more intelligently, or integrate with Google’s search prowess to provide real-time info. By embedding a powerful model across its platform, Google could deliver an experience where the AI feels like a knowledgeable colleague who has read all your docs and emails and also knows the web. But again, it stops at Google’s boundary – for broader agentic behavior spanning third-party apps, Google’s strategy so far is to integrate popular third-party tools into Google’s apps (e.g. smart canvas chips for apps like Asana or Trello in Docs/Chat[15]), rather than letting the AI roam outside.

Notion AI – The Unified Workspace Assistant

Notion, the all-in-one workspace app, has also stepped into the AI arena with a unique angle. Notion AI is built to be your assistant within Notion, but notably, Notion has introduced AI Connectors that bring outside data into its AI’s purview[16][17]. In other words, Notion wants to be “a single place to find the information you need — even if it lives outside your workspace”[16]. Connectors for Notion AI (currently in beta for Business/Enterprise users) allow linking tools like Slack, Google Drive, Jira, GitHub, and even Gmail to Notion’s AI[18][19]. Once linked, you can ask Notion’s AI questions in natural language and it will surface relevant information from those connected sources with citations[17]. For example, you could ask within Notion, “What were the action items from my team’s Slack discussion yesterday?” and the AI might retrieve and summarize messages from the Slack channel, citing the specific messages. Or “Do we have a Google Doc outlining the Q4 roadmap?” and it can pull a snippet from that Drive file.

The capabilities of Notion’s AI connectors emphasize search and summarization – much like Microsoft’s Copilot connectors – but focused on knowledge work. Notion explicitly notes that connectors are best for “finding and summarizing information,” and not for heavy data analysis or executing complex transformations[20]. The assistant can aggregate info from multiple sources in one answer (with some limits on how much it can handle at once). It’s essentially a RAG (retrieval-augmented generation) approach: find relevant content from Slack, Google Drive, etc., and use an LLM to formulate an answer, complete with references. This is immensely useful for enterprise knowledge management – employees can query a Notion AI chat and get answers drawn from across their documentation and communication silos.
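Notion has not published its pipeline, but the retrieval-augmented generation pattern named above can be sketched in a few lines: score stored snippets against the question, keep the best matches, and number them so the model’s answer can cite its sources. This minimal sketch assumes simple word overlap in place of the embedding search that production systems typically use, and it stops before the actual LLM call; `Doc`, `retrieve`, and `build_prompt` are hypothetical names.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    source: str  # e.g. "Slack #team" or "Google Drive/Q4 roadmap"
    text: str


def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric words, used for a crude relevance score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: list[Doc], k: int = 2) -> list[Doc]:
    """Rank snippets by word overlap with the question (stand-in for vector search)."""
    q = tokens(question)
    scored = [(len(q & tokens(d.text)), d) for d in docs]
    scored = [pair for pair in scored if pair[0] > 0]  # drop irrelevant snippets
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def build_prompt(question: str, hits: list[Doc]) -> str:
    """Number each retrieved snippet so the model can cite it as [1], [2], ..."""
    context = "\n".join(f"[{i}] ({d.source}) {d.text}" for i, d in enumerate(hits, 1))
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"{context}\n\nQuestion: {question}"
    )
```

The numbered context is what makes citations possible: the model is asked to reference `[1]`, `[2]`, and the application maps those numbers back to the original Slack message or Drive file.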

From a UX standpoint, Notion AI lives inside the Notion application as a chat popup or a sidebar widget (the “friendly face with wavy eyebrows” icon in the corner)[21]. It’s available wherever you are in your Notion workspace. A key difference is that Notion’s assistant is context-aware of your Notion pages and can also take actions within Notion (like editing content or creating summaries of the current page). Notion recently announced an “AI Agent” concept in Notion 3.0 that can even automate tasks like a little worker bee (for instance, an Agent that can run for 20 minutes unsupervised to perform a series of actions in your workspace)[22]. This hints at a more autonomous agent vision, though in controlled scenarios.

Notion’s platform strategy by adding connectors is to increase its gravity as the hub for work. If all your info – even from other apps – can be accessed via Notion AI, it strengthens the case to live in Notion and treat it as mission control. Unlike Microsoft and Google, Notion is not an OS or an email provider or storage service (apart from what users put into it), so it’s cleverly compensating by pulling others’ data in. One limitation: Notion’s connectors have some latency and scope constraints – for instance, it may take time to ingest external content (they mention it can take hours to index large amounts of data)[23], and typically only the last year’s content might be accessible[24]. Also, Notion requires a higher-tier plan to use most connectors, meaning it’s aimed at serious business use cases. For a product lead deciding on tooling, Notion’s proposition is an integrated knowledge base with an AI brain that knows your company’s stuff. The trade-off is that the AI is mostly confined to answering questions or generating content in Notion; it’s not designed to be a general assistant for, say, sending an email or scheduling a meeting outside Notion.

Perplexity’s Comet – An Independent AI Agent with Web and App Superpowers

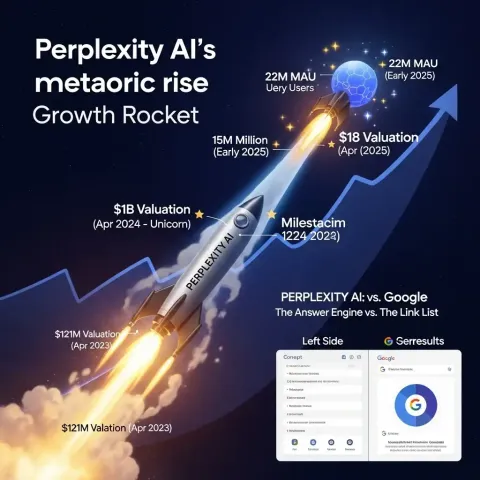

At the cutting edge of assistant technology is Perplexity AI’s “Comet”, which takes a more agentic computing approach. Perplexity began as an AI-powered answer engine, but with Comet (launched mid-2025) it reimagined the web browser as an AI assistant you can converse with anywhere. Comet is essentially a browser with a built-in AI copilot that can see the content of web pages, control the browser, and integrate with user accounts to perform tasks[25][26].

Perplexity’s approach to connectors is notably ambitious: it offers a Gmail and Google Calendar connector, as well as connectors for tools like Notion, GitHub, and more[27][28]. Once you enable, say, the Gmail/Calendar connector, the AI can query your emails and events, and even act on them[29][30]. For example, you can ask, “Summarize the emails I received yesterday and highlight any that need my attention,” and the assistant will read through your inbox and produce a digest[31]. You could follow up, “Send a polite follow-up email to the client who hasn’t replied yet,” and if using Comet’s full capabilities, it can actually draft and send that email on your behalf[32]. Similarly, it can check your calendar and list your upcoming meetings, and even schedule events via natural language commands[33][34] (e.g. “Create a 1-hour meeting next Wednesday at 9am for project planning” – and it will add that event to Google Calendar).
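Perplexity has not documented how Comet turns a request like the one above into a calendar entry. One plausible final step, sketched here under the assumption that a language model has already extracted the title, weekday, start hour, and duration, is to resolve the relative date and build an event body shaped like the Google Calendar API’s Event resource (`summary`, `start`, `end`), which could then be passed to that API’s `events.insert` method. The helper names, the example time zone, and the reading of “next Wednesday” as the first Wednesday strictly after today are all assumptions.

```python
from datetime import date, datetime, timedelta


def next_weekday(today: date, weekday: int) -> date:
    """First date strictly after `today` that falls on `weekday` (Monday=0 ... Sunday=6)."""
    days_ahead = (weekday - today.weekday()) % 7 or 7
    return today + timedelta(days=days_ahead)


def build_event(title: str, today: date, weekday: int, hour: int,
                duration_hours: float, time_zone: str) -> dict:
    """Return a dict shaped like a Google Calendar API v3 Event body."""
    day = next_weekday(today, weekday)
    start = datetime(day.year, day.month, day.day, hour)
    end = start + timedelta(hours=duration_hours)
    # dateTime carries no UTC offset here, so the explicit timeZone field is required.
    return {
        "summary": title,
        "start": {"dateTime": start.isoformat(), "timeZone": time_zone},
        "end": {"dateTime": end.isoformat(), "timeZone": time_zone},
    }


# "Create a 1-hour meeting next Wednesday at 9am for project planning",
# issued on Monday 20 October 2025 (Wednesday is weekday 2):
event = build_event("Project planning", date(2025, 10, 20), 2, 9, 1, "Europe/London")
```

Here `event["start"]["dateTime"]` is `"2025-10-22T09:00:00"`. Keeping date arithmetic in ordinary code, rather than asking the model to produce timestamps directly, makes the result deterministic and easy to test.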

The user experience with Comet is quite different from Copilot or Duet. Comet’s AI lives in a sidebar of the browser and can be summoned on any webpage. Because it’s a browser, it has a broad view – it can combine web search with personal data. For instance, it could answer, “Who is the person I’m meeting tomorrow?” by pulling your calendar event (finding the name) and then searching the web or LinkedIn for that person to give you a quick bio. It essentially functions like an AI agent that can operate web services and your own services in tandem. The assistant’s ability to control the browser is a standout feature: if an API call fails (say, it can’t get your emails via the official API), it will literally navigate your open Gmail tab and read the page content like a human would, then extract what’s needed[35][36]. This “if all else fails, emulate the user” approach, while less efficient, shows how far the agent will go to complete a task.
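The API-first, page-reading-second behavior described above is a general fallback pattern. The sketch below shows its shape under loud assumptions: `api_fetch` and `read_page` are hypothetical stand-ins for an official API client and for the text of an open browser tab, and the one-subject-per-line parsing is a toy. Comet’s actual implementation is not public.

```python
from typing import Callable


def fetch_with_fallback(api_fetch: Callable[[], list[str]],
                        read_page: Callable[[], str]) -> tuple[list[str], str]:
    """Try the structured API first; if it fails, parse what the open page shows.

    Returns the items plus which path produced them, so the agent can
    report (and log) that it fell back to the slower method.
    """
    try:
        return api_fetch(), "api"
    except Exception:
        # Toy fallback (an assumption): treat the rendered inbox as text,
        # one email subject per non-empty line.
        lines = [ln.strip() for ln in read_page().splitlines()]
        return [ln for ln in lines if ln], "page"
```

Returning the path taken alongside the data matters for trust: a user or admin can see when the agent resorted to screen-reading, which is slower and more fragile than the API.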

Perplexity’s platform strategy is about being an independent layer on top of everything. Unlike Microsoft or Google, Perplexity isn’t tied to an OS or a productivity suite – it aims to be the one assistant you use regardless of platform. It supports multiple connectors (Google and Microsoft accounts, for example) and works on Mac or Windows via its own browser. Comet has been positioned as a premium product (tied to their “Perplexity Max” plan) and is for now a power-user tool – an advanced tech consumer’s AI sidekick. For enterprise leaders, Perplexity demonstrates what’s possible when you let an AI off the leash: genuine cross-app automation. But it also highlights the risks – giving a third-party AI broad permissions requires trust. There have even been security studies (e.g. on “CometJacking”) pointing out how a malicious prompt on a webpage could trick the assistant into unintended actions if safeguards fail[37][38]. This underscores why Microsoft and Google are taking a more step-by-step approach in enterprise settings.
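One common mitigation for the prompt-injection risk described above is to gate side-effecting actions: anything that sends, schedules, or deletes needs explicit user confirmation, and any action whose instruction originated in untrusted page content is refused outright. The sketch below shows that policy only. It assumes the agent can reliably track where each instruction came from (the genuinely hard part), and the action names, `ProposedAction`, and `decide` are hypothetical, not a description of how Comet works.

```python
from dataclasses import dataclass

# Hypothetical set of actions that change something outside the assistant.
SIDE_EFFECTS = {"send_email", "create_event", "delete_file"}


@dataclass
class ProposedAction:
    name: str
    # True if the instruction behind this action came from web-page or email
    # content rather than from the user's own request.
    from_untrusted_content: bool


def decide(action: ProposedAction, user_confirmed: bool) -> str:
    """Return 'allow', 'ask', or 'block' for an action the agent wants to take."""
    if action.name not in SIDE_EFFECTS:
        return "allow"  # read-only steps such as search or summarize proceed
    if action.from_untrusted_content:
        return "block"  # text on a page must never trigger a send or delete
    return "allow" if user_confirmed else "ask"
```

The design choice is asymmetric on purpose: reading stays frictionless, while anything irreversible needs both a trusted origin and a human yes.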

In summary, Microsoft’s Copilot connectors, Google’s Duet AI, Notion’s AI, and Perplexity’s Comet all share the goal of making our digital lives more connected and our tasks more automated, but they execute it differently:

- Microsoft Copilot: OS-level integration, bridging Microsoft and Google worlds, focused now on unified search and content generation within the Windows experience. Strategy: keep Windows central by accommodating other ecosystems, aiming for broad adoption.

- Google Duet (Gemini): App-specific AI embedded deeply in Google’s ecosystem, delivering context-aware help in each Workspace app. Strategy: enhance the value (and lock-in) of Google Workspace, with cutting-edge models to ensure best-in-class AI capability within those bounds.

- Notion AI: Workspace knowledge assistant pulling in external data, oriented around knowledge retrieval and writing in Notion. Strategy: make Notion the hub for work by leveraging AI to connect dots across tools – but focused on enhancing Notion’s role rather than doing arbitrary external actions.

- Perplexity Comet: A stand-alone AI agent with broad powers – web search + personal app integration + the ability to act (send emails, schedule events) in one interface. Strategy: appeal to users who want an AI “butler” that works across everything, showcasing the future of agentic computing albeit with cutting-edge risks and costs.

High-Value Use Cases Enabled by Cross-App AI Assistance

Why do these connectors and integrations matter? The real-world use cases illustrate how AI assistants can save time, reduce friction, and even uncover new insights by virtue of seeing the bigger picture across our apps. Here are some of the highest-value scenarios for both enterprise and individual users:

- Unified Search and Information Retrieval: Perhaps the most obvious win is doing away with siloed searches. Instead of separately querying Gmail, then Google Drive, then Outlook, you can ask one question and get a consolidated answer. For example, an executive could ask, “Find all documents and emails related to the Q3 budget across my accounts,” and Copilot or Notion AI could pull a list of files from OneDrive/Drive and emails from Gmail/Outlook that match[5]. This not only saves time but can surface things you might miss if you forgot to search a particular repository. It’s like having a personal Google that indexes your world of work. In enterprises, employees waste countless hours seeking information; an AI that serves as an enterprise search concierge is immensely valuable.

- Summarization of Emails and Documents: Many of these assistants can read lengthy content and give you a summary. Copilot or Duet can summarize a multi-paragraph email thread in seconds – useful for getting the gist of an email chain without reading every message. Google’s Duet does this in Gmail with “summarize this thread” for long email exchanges, and in Chat it auto-summarizes missed conversations[39]. Perplexity’s assistant can summarize a long email or even multiple emails on the same topic[40]. This is crucial for busy professionals: imagine starting your day and asking, “Copilot, summarize all unread emails from last night,” and getting a concise briefing. Similarly, summarizing documents – Notion AI can summarize a connected PDF or a Slack thread, Google’s Duet can summarize a Docs file or a transcript. Summaries help in digesting information faster, and when combined with search, you can even do things like “summarize all files about Project X” to quickly glean collective knowledge.

- Meeting Preparation and Follow-ups: Tapping into calendar and email data allows AI assistants to become powerful meeting aides. With connectors, one can ask, “What do I need to know for my meeting with Acme Corp tomorrow?” A capable assistant (especially one like Perplexity or potentially Copilot in the future) could check your calendar for meeting details, then pull the latest emails with that client, recent documents or proposals, and perhaps the LinkedIn profile of the attendees – all distilled into a prep brief. In fact, Perplexity’s example queries include “Who am I meeting with this week? Write bios.”[41], which shows the AI gathering names from the calendar and fetching relevant info. After the meeting, the AI could help draft a follow-up email or even auto-generate meeting notes if given a transcript (Google’s Duet in Google Meet already promises “auto notes and action items” for meetings[42]). For enterprise users, these capabilities mean less manual legwork around meetings – the AI can become a junior chief-of-staff, ensuring you’re informed going in and that outcomes are documented coming out.

- Cross-application Task Automation: As AI assistants mature, they’re starting to carry out multi-step tasks that span apps. We see early glimmers of this in Perplexity Comet – e.g., it can find a particular email and then draft a response and send it, all through one interaction[30][32]. Consider the workflow of processing a customer support request: an AI could identify an email from a client, pull up related orders from a database (via connectors or plugins), and draft a personalized response, maybe even create a follow-up task in a project management tool. Microsoft’s and Google’s current integrations are more about assistive steps (find this info, draft that content), but the trajectory is clearly towards automation: Copilot creating documents on command[43], or Duet updating a spreadsheet based on data it summarized from emails. Notion’s vision of AI Agents hints at automating routine tasks inside the workspace (like updating project statuses or triaging bug reports with AI actions)[44][45]. The highest-value scenario here is freeing up humans from “swivel-chair” work – the repetitive toggling between apps to move information around or execute menial actions. Instead, you delegate to the assistant.

- Prioritization and Decision Support: With an overload of information, just finding or summarizing isn’t enough – we often need help deciding what matters. AI assistants can leverage connectors to provide insights and prioritization. For instance, Perplexity’s assistant can identify “urgent emails from this week”[31], not just summarize all emails. It can determine which messages likely require your attention first (perhaps by looking for certain keywords, sender importance, or deadlines mentioned). Copilot might soon be able to answer, “What are the highest priority tasks I’ve committed to in emails?”, which would involve scanning your communications for promises or deadlines. These kinds of higher-order answers are extremely valuable for personal productivity and for managers juggling lots of inputs. By integrating with calendar, email, and task tools, an AI could even proactively suggest, “You have back-to-back meetings today, and 5 emails flagged important – do you want a summary of each and a draft response ready by noon?” This shifts the assistant from reactive query responder to proactive partner, which is the ultimate goal.

- Content Creation and Multi-modal Output: Finally, a use case enhanced by connectors is richer content creation. Microsoft Copilot’s ability to generate Office documents from a prompt[7] means you can effectively say, “Using the data in this spreadsheet and the notes from that email, create a PowerPoint presentation,” and watch a first draft materialize. Google’s Duet already lets you do things like, “Take this Docs outline and make it a Slides deck”, automatically populating slides[12]. That’s cross-app magic happening via AI. Connectors could feed the AI with content from different sources to be merged or transformed. Even multi-modal aspects come in: Duet can generate images to illustrate a slide; Copilot in Windows has been experimenting with Vision features (like analyzing what’s on your screen or images you provide)[46][47]. We can foresee a scenario where you might tell Copilot, “Create a report in Word with charts from Excel file X and include relevant excerpts from PDF Y (in my Google Drive),” and get a synthesized document. This kind of orchestration of content across formats and apps is complex but incredibly high-value for accelerating work.
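The prioritization idea in the list above (keywords, sender importance, deadlines mentioned) can be sketched as a simple scoring rule. This is a hypothetical, rule-based stand-in: production assistants would more likely ask a language model to classify messages, and the keyword list, weights, and the `Email`, `priority`, and `triage` names are all assumptions for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical urgency vocabulary and weights, chosen only for illustration.
URGENT_WORDS = {"urgent", "asap", "deadline", "today", "blocker"}
DEADLINE = re.compile(r"\bby (mon|tues|wednes|thurs|fri|satur|sun)day\b")


@dataclass
class Email:
    sender: str
    subject: str
    body: str


def priority(email: Email, vip_senders: set[str]) -> int:
    """Score an email; higher means read it sooner. vip_senders holds lowercase addresses."""
    words = set(re.findall(r"[a-z]+", f"{email.subject} {email.body}".lower()))
    score = 2 * len(words & URGENT_WORDS)  # urgency keywords
    if email.sender.lower() in vip_senders:  # important sender
        score += 3
    if DEADLINE.search(email.body.lower()):  # explicit "by Friday"-style deadline
        score += 2
    return score


def triage(emails: list[Email], vip_senders: set[str]) -> list[Email]:
    """Order emails from most to least urgent by the heuristic score."""
    return sorted(emails, key=lambda e: priority(e, vip_senders), reverse=True)
```

Rules like these are transparent and cheap but brittle; a model-based classifier catches phrasing the keyword list misses, at the cost of being harder to audit.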

In all these use cases, the common thread is convenience and cognitive uplift. The AI connectors save you from hunting, from reading voluminous text, and from doing repetitive manipulations. They allow you to focus on higher-level decision-making while the assistant handles the grunt work of gathering and preparing information. For product leads and tech-savvy users, these are not just gimmicks – they change how one allocates time. Instead of spending the first hour of your day searching and triaging, you could spend it acting on insights the AI has already pre-digested for you.

Broader Implications: Toward Agentic, Multimodal, Assistant-Based Computing

Microsoft’s move to integrate Gmail, Drive, and Calendar into Copilot is one more step toward a future of agentic computing – where software agents take initiative to help users, rather than waiting for explicit, low-level commands. It also underscores a shift in user experience design: from app-centric to assistant-centric interactions. Let’s reflect on what these trends could mean going forward:

- Agentic Computing: The term refers to AI systems that can act as agents on our behalf, making autonomous decisions or performing tasks with minimal guidance. Today’s connectors still mostly respond to direct prompts (“find this,” “summarize that”). But by wiring AIs into all our data and tools, we are laying the groundwork for much more proactive agents. If you grant an AI access to your calendar, email, files, tasks, etc., you can envision it eventually doing things like auto-scheduling your week based on priorities it infers, or handling small email replies on its own (with your occasional oversight). Notion’s introduction of AI Agents that can run for a period to handle routine tasks is an early example[22]. Microsoft and Google haven’t gone fully autonomous (likely for reliability and trust reasons), but even Copilot now has features like suggesting actions based on screen context, and it could evolve to quietly organize information for you in the background. The connectors are a necessary piece for agency – an agent can’t do much if it’s blind to half your life. Now that Copilot can “see” across systems, the next step is letting it decide in bounded ways how to assist without being asked each time.

- Multi-modal Interaction: The assistants are increasingly multi-modal, both in input and output. “Multimodal” here means handling text, voice, images, perhaps video or other formats. Microsoft, for instance, has talked about Copilot Vision, where the AI can “see” your screen or images you share and understand them[48]. Being able to take a screenshot and ask Copilot, “What is this error message about?” or “Summarize the chart on this page,” adds a visual modality to the interaction. Google’s Gemini models were designed to be multimodal from the start, which could let Duet analyze images or even generate video in the future. Voice is another modality: we already talk to Siri/Alexa, and we might soon be voicing complex requests to Copilot on our PC or Duet on our phone (Perplexity’s mobile app already supports voice queries to its AI). For product design, this means the assistant might manifest not just as a chat box, but as a voice in your earbuds during a meeting (“Your AI whispers: you discussed a similar issue last month, want me to pull up those notes?”) or as an augmented reality overlay highlighting information. The connectors amplify multimodality by providing more types of content (images, calendar timelines, etc.) for the AI to reason over and present.

- Assistant-Based UX Paradigm: We are on the cusp of a paradigm shift where the primary interface is not a collection of apps and menus, but a conversation with an intelligent assistant. This doesn’t mean apps disappear, but how we navigate them could fundamentally change. Microsoft’s approach hints at this: Windows Copilot sits over everything, so instead of clicking through folders or menus, you might increasingly just ask Copilot to do it. Google still surfaces its AI within apps, but even Google is experimenting with assistant as a front-end (e.g., the Gemini app, formerly Bard, as an entry point to services). As these assistants become more capable, users will come to expect that any task can start with a simple request: “draft this, fetch that, show me those, update this.” The UX challenge for developers is to integrate their products with this assistant layer – possibly via APIs or connectors – so that their functionality is accessible through natural language and not just button clicks.

For product leaders, the implication is clear: AI assistants are becoming, in a sense, a new operating layer, a meta-layer that coordinates apps. Companies should consider how their tools can plug into Copilot, Duet, or other assistants, because if your app’s data or actions aren’t accessible to the AI, users who increasingly rely on the assistant may overlook your app. Microsoft’s and Notion’s connectors, along with OpenAI’s plugin ecosystem (since superseded by custom GPTs and their Actions), provide pathways to integrate. This also raises questions of standards and openness: will we see a world of many proprietary connectors (one for Microsoft, one for Google, one for Notion, etc.), or common protocols that let any assistant talk securely to any app? For now the landscape is fragmented, but market pressure, especially from enterprises, could push toward more open interoperability.
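
To make “plugging into the assistant layer” more concrete, here is a minimal Python sketch of the general pattern: an app publishes a catalog of actions, each with a natural-language description the assistant can read, plus a dispatcher that executes a structured call of the kind a model might emit. Everything here (`ToolRegistry`, `find_contact`, the call format, the sample contacts) is hypothetical and illustrative; it is not Microsoft’s, Notion’s, or OpenAI’s actual connector API.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class Tool:
    """One app capability exposed to an assistant (hypothetical schema)."""
    name: str
    description: str  # natural-language summary the assistant reads when choosing a tool
    handler: Callable[..., Any]


@dataclass
class ToolRegistry:
    """Hypothetical registry an app could publish to an assistant layer."""
    tools: Dict[str, Tool] = field(default_factory=dict)

    def register(self, name: str, description: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
        # Decorator that records a function as an assistant-callable tool.
        def wrap(fn: Callable[..., Any]) -> Callable[..., Any]:
            self.tools[name] = Tool(name, description, fn)
            return fn
        return wrap

    def catalog(self) -> List[Dict[str, str]]:
        # What the assistant sees: names and descriptions only, never the code.
        return [{"name": t.name, "description": t.description} for t in self.tools.values()]

    def dispatch(self, call: Dict[str, Any]) -> Any:
        # `call` mimics a structured tool call a model might emit (assumed format),
        # e.g. {"name": "find_contact", "arguments": {"query": "Sarah"}}.
        tool = self.tools.get(call["name"])
        if tool is None:
            raise KeyError(f"unknown tool: {call['name']}")
        return tool.handler(**call.get("arguments", {}))


registry = ToolRegistry()

# Toy in-memory data standing in for a real contacts store.
CONTACTS = {"Sarah Lee": "sarah@example.com", "Raj Patel": "raj@example.com"}


@registry.register("find_contact", "Look up a contact's email address by full or partial name.")
def find_contact(query: str) -> List[str]:
    q = query.lower()
    return [email for name, email in CONTACTS.items() if q in name.lower()]


if __name__ == "__main__":
    print(registry.catalog())
    print(registry.dispatch({"name": "find_contact", "arguments": {"query": "sarah"}}))
```

The design choice worth noting is the split between the catalog (descriptions only, which is all a model needs to pick an action) and the handlers (code that stays under the app’s own control).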

Another implication concerns privacy and trust. An assistant that can read all your emails and files carries a matching responsibility, and each player is addressing this: Microsoft emphasizes that the connectors are opt-in and user-controlled; Google says it grounds Duet’s answers in your own content and does not use your Workspace data to train its models; Notion explicitly states that it doesn’t use customer data to train models and that it respects existing permissions[49]; Perplexity touts enterprise-grade encryption and admin controls[50]. Still, users and organizations must take a leap of faith to let an AI roam across sensitive information. The assistant-based UX will succeed only if these systems prove reliable and secure. A hallucination in a casual context is one thing; an AI that incorrectly summarizes a legal document or sends an email by mistake could cause serious problems[51]. The road to agentic computing will require not just smarter models but robust guardrails, auditing of AI actions, and likely new user training (“AI literacy”) so that people know how to supervise their assistants effectively.
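
The guardrails and auditing mentioned above can be sketched in a few lines. The Python below assumes a simple, hypothetical policy: read-only actions run immediately, actions with side effects (sending email, deleting files, accepting invites) require explicit human approval, and every attempt is written to an audit log. The action names, `GuardedExecutor`, and the stand-in functions are illustrative assumptions, not how Copilot, Duet, Notion, or Perplexity actually implement their controls.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List

# Assumed policy for this sketch: actions with side effects need explicit approval.
SENSITIVE_ACTIONS = {"send_email", "delete_file", "accept_invite"}


@dataclass
class GuardedExecutor:
    """Hypothetical wrapper that gates and audits actions an assistant proposes."""
    confirm: Callable[[str, dict], bool]  # asks the human; True means approved
    audit_log: List[dict] = field(default_factory=list)

    def run(self, action: str, args: dict, fn: Callable[..., Any]) -> Any:
        entry = {"ts": time.time(), "action": action, "args": args}
        # Side-effecting actions are blocked unless the user approves them.
        if action in SENSITIVE_ACTIONS and not self.confirm(action, args):
            entry["status"] = "blocked"
            self.audit_log.append(entry)
            return None
        try:
            result = fn(**args)
            entry["status"] = "ok"
            return result
        except Exception as exc:
            entry["status"] = f"error: {exc}"
            raise
        finally:
            # Every executed attempt is logged, whether it succeeds or fails.
            self.audit_log.append(entry)


# Stand-ins for real connector calls (hypothetical).
def search_files(query: str) -> List[str]:
    return [f"{query}-notes.docx"]


def send_email(to: str, body: str) -> str:
    return f"sent to {to}"


if __name__ == "__main__":
    # Simulates a user who declines every confirmation prompt.
    cautious = GuardedExecutor(confirm=lambda action, args: False)
    print(cautious.run("search_files", {"query": "school"}, search_files))
    print(cautious.run("send_email", {"to": "sarah@example.com", "body": "Hi"}, send_email))
    print(json.dumps([e["status"] for e in cautious.audit_log]))
```

In a real deployment the `confirm` callback would be a UI prompt and the log would go to durable, tamper-evident storage; both are outside the scope of this sketch.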

For leaders making product or tooling decisions, these AI assistants should be viewed not as flashy demos but as productivity tools that can either supercharge an organization or, if ignored, leave it lagging. We’re past the phase of trivial chatbots; AI is becoming infrastructure for work. Forward-thinking teams are already piloting Copilot or Duet for internal knowledge management and measuring how much time they save in support, coding, documentation, and similar work. The competitive advantage of using these tools thoughtfully (with policies for confidentiality and for verifying AI outputs) could be substantial. Likewise, businesses building software should consider integrating AI assistance to stay relevant in an assistant-driven world.

Conclusion: Insights for the Road Ahead

Microsoft’s introduction of Gmail/Google Drive/Calendar connectors in Copilot is more than just a convenience feature – it’s a strategic marker in the evolution of personal computing. The lines between platforms are blurring at the AI layer: productivity assistants are aggregating our digital lives in service of helping us work smarter. Microsoft, by embracing third-party integration, is positioning Copilot (and by extension Windows) as the central hub for a user’s productivity, regardless of source. This raises the bar for competitors: Google will need to ensure Duet AI offers equally powerful cross-context assistance within Workspace (and perhaps eventually beyond it) to keep users glued to its platform. Smaller players like Notion and Perplexity demonstrate that innovation is alive and well – they’ve pioneered features (like autonomous task agents and full web integration) that even the tech giants are now following.

For product leaders and advanced tech users, the key takeaway is to prioritize insight and practical relevance over hype. Yes, terms like “agentic computing” sound like buzzwords, but the practical benefits (unified search, automatically generated briefs, fewer missed emails, quicker content creation) are real and attainable today. It’s wise to pilot these capabilities with clear success criteria. Does using Copilot connectors reduce project research time by X%? Does Duet AI cut the time spent drafting routine emails? Does Notion AI help new team members find information without pestering colleagues? Use those answers to guide adoption. Also keep an eye on user experience: introducing an AI assistant into workflows requires change management. Some users will need training before they trust and use the assistant effectively; others may overtrust it, so clear guidelines on verification are important.
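
As an example of the kind of success criterion described above, the Python sketch below compares median task times before and during a pilot and checks the reduction against a target. The timing numbers are invented purely for illustration, and using the median rather than the mean is a choice made here to limit the influence of a few unusually long tasks; it is not a method prescribed by any vendor.

```python
from statistics import median
from typing import Sequence


def percent_reduction(baseline: Sequence[float], pilot: Sequence[float]) -> float:
    """Percent drop in median task time from baseline to pilot (positive means faster)."""
    before, after = median(baseline), median(pilot)
    if before <= 0:
        raise ValueError("baseline median must be positive")
    return 100.0 * (before - after) / before


def meets_target(baseline: Sequence[float], pilot: Sequence[float], target_pct: float) -> bool:
    """True if the pilot cut median task time by at least target_pct percent."""
    return percent_reduction(baseline, pilot) >= target_pct


# Hypothetical minutes per research task, invented for illustration only.
baseline_minutes = [42, 35, 50, 38, 47]
pilot_minutes = [30, 28, 41, 25, 33]

if __name__ == "__main__":
    change = percent_reduction(baseline_minutes, pilot_minutes)
    print(f"Median research time down {change:.1f}%")  # prints "Median research time down 28.6%"
    print(meets_target(baseline_minutes, pilot_minutes, target_pct=20))  # prints True
```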

In the bigger picture, we are likely heading toward a world where your primary digital assistant travels with you across devices and applications, orchestrating your intents. Whether it’s called Copilot, Duet, Siri, Alexa, or something else, the concept will be similar: an ever-present conversational layer that mediates your interaction with technology. The new Gmail and Drive connectors in Microsoft Copilot hint at a future where such an assistant is truly platform-agnostic, caring less about who made an app and more about how to get the job done for you. It’s an exciting prospect for those willing to embrace it, and it brings us closer to a long-envisioned computing ideal: technology that works for us proactively, personally, and intelligently, rather than passively waiting for instructions.

The journey has just begun, but the direction is clearer than ever. Leaders should watch these developments closely, experiment boldly but thoughtfully, and tie every experiment back to the core question: does this help people and organizations achieve what they value more effectively? If the answer is yes, as it increasingly will be, then integrating AI assistants like Copilot (and its connectors) is not just a tech upgrade but a strategic imperative for the modern workplace[52]. The competitive edge, after all, will belong to those who make human-AI collaboration a natural, productive part of everyday work.

[1] [3] [4] [7] [9] Copilot on Windows: Connectors, and Document Creation begin rolling out to Windows Insiders | Windows Insider Blog

[2] [6] [8] Microsoft Copilot Can Now Fly in the Right Seat of Your Google Account | VICE

https://www.vice.com/en/article/microsoft-copilot-google-integration/

[5] [43] Copilot on Windows can now create Office documents and connect to Gmail | The Verge

[10] [11] [12] [14] [51] [52] Google’s Duet AI now available in Docs, Gmail, and other Workspace apps | The Verge

https://www.theverge.com/2023/8/29/23849457/google-duet-ai-docs-slides-gmail

[13] [15] [39] Announcing the launch of an enhanced Google Chat | Google Workspace Blog

https://workspace.google.com/blog/product-announcements/welcome-new-google-chat

[16] [17] [18] [19] [20] [23] [24] [49] Notion AI Connectors – Notion Help Center

https://www.notion.com/help/notion-ai-connectors

[21] [44] [45] Everything we launched at Make with Notion

https://www.notion.com/blog/conference-product-releases

[22] Notion 3.0 Introduces AI Agents for Task Automation | Reworked

[25] [26] [35] [36] [40] Comet Browser: A Guide With Practical Examples | DataCamp

https://www.datacamp.com/tutorial/comet-perplexity

[27] [28] [29] [30] [31] [32] [33] [34] [41] [50] Connecting Perplexity with Gmail and Google Calendar | Perplexity Help Center

[37] Agentic Browser Security: Indirect Prompt Injection in Perplexity Comet

https://brave.com/blog/comet-prompt-injection/

[38] CometJacking: How One Click Can Turn Perplexity's Comet AI ...

[42] Duet AI for Google Workspace now generally available

https://workspace.google.com/blog/product-announcements/duet-ai-in-workspace-now-available

[46] Beyond words: AI goes multimodal to meet you where you are

[47] Microsoft Copilot can now read your screen, think deeply, and speak ...

[48] Copilot Vision: Multimodal AI Assistant for Windows That Sees ...