Author: Boxu Li at Macaron

Introduction:

Meta’s December 2025 personalization update marks a pivotal evolution in how user behavior shapes content and ad experiences across Facebook, Instagram, WhatsApp, Messenger, and even wearable devices. With this update, Meta is weaving generative AI chat interactions (text, voice, and even visual prompts) directly into its personalization stack (reuters.com, techcrunch.com). In other words, everything you say to Meta’s AI could soon influence what you see on Meta’s platforms. This article dives into the technical architecture and UX integration of this transformation, explaining how AI chat signals are captured, processed, and unified across Meta’s ecosystem. We’ll explore product-layer examples like Meta AI in Messenger, Instagram DMs, and Ray-Ban smart glasses, illustrating how AI chat is becoming a core personalization layer alongside traditional signals (likes, follows, clicks). The result is an ambitious playbook for product leads: AI chat as an intent-rich signal feeding the algorithmic brain of Meta’s feed and ads.

Meta’s apps (Facebook and Instagram mobile UIs shown) began notifying users in October 2025 about upcoming changes. Interactions with Meta’s AI assistant – via text or voice – will soon personalize the content and ads you see, starting December 16, 2025.

From Likes to Chats: AI Conversations as Personalization Signals

For years, Meta’s recommendation engines have learned from your taps and clicks: the posts you like, pages you follow, videos you watch, and so on. The December 2025 update adds a new category of signal: your conversations with Meta’s AI assistants (reuters.com). Whether you’re chatting via text in Messenger or speaking a voice query on your Ray-Ban Meta smart glasses, those interactions will feed into the same algorithms that rank your feed and target your ads (reuters.com, techcrunch.com).

The rationale is straightforward: what you ask or discuss with an AI can reveal your current interests or intentions even more explicitly than passive signals. For example, if you ask Meta’s assistant “What are the best hiking trails around here?”, you are clearly signaling an interest in hiking. After this update, Meta can treat that conversational intent much as if you had liked a hiking page or searched for hiking gear, and adjust your content accordingly (reuters.com, about.fb.com). Indeed, Meta gives this exact scenario: “if you chat with Meta AI about hiking, we may learn you’re interested in hiking… you might start seeing recommendations for hiking groups, posts from friends about trails, or ads for hiking boots” (about.fb.com). In short, AI chat is becoming another first-class input to Meta’s personalization algorithms, on par with social and engagement signals.

From a system architecture perspective, these AI chat interactions are most likely processed through natural language understanding pipelines to extract topical and contextual signals. Text chats are analyzed directly, while voice commands are first converted to text via speech recognition; both are then analyzed for keywords, entities, and intent. If you share an image with Meta AI (say, by using your phone camera or smart glasses to show an item), the visual content can be analyzed by computer vision models, and the derived insights (e.g. recognizing a product or landmark you asked about) likewise become part of your interest profile (techcrunch.com). Meta confirmed that voice recordings, pictures, and videos analyzed through Meta AI features will feed into its ad targeting systems under this update (techcrunch.com). In essence, Meta has instrumented its generative AI features to act as sensors for user preferences.
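A minimal Python sketch of this normalization step might look like the following, assuming every interaction arrives as a small record tagged with its modality. The `AIInteraction` record and `normalize_to_text` function are hypothetical, and the injected `transcribe` and `describe_image` callables stand in for a speech-recognition model and a vision model; Meta has not published how its pipeline is built.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class AIInteraction:
    """One user turn sent to the assistant (hypothetical schema)."""
    modality: str                  # "text", "voice", or "image"
    text: Optional[str] = None     # typed or spoken question, if any
    audio: Optional[bytes] = None  # raw voice recording, if any
    image: Optional[bytes] = None  # photo from a phone or glasses camera


def normalize_to_text(
    interaction: AIInteraction,
    transcribe: Callable[[bytes], str],
    describe_image: Callable[[bytes], str],
) -> str:
    """Reduce any modality to plain text so one NLU stage can handle it.

    `transcribe` (speech-to-text) and `describe_image` (vision captioning)
    are injected placeholders, not real Meta APIs.
    """
    if interaction.modality == "text":
        return interaction.text or ""
    if interaction.modality == "voice":
        return transcribe(interaction.audio or b"")
    if interaction.modality == "image":
        caption = describe_image(interaction.image or b"")
        # Keep any accompanying question next to the image caption.
        return f"{interaction.text or ''} [image: {caption}]".strip()
    raise ValueError(f"unknown modality: {interaction.modality}")


# Stub models standing in for real ASR and vision systems.
def fake_asr(audio: bytes) -> str:
    return "best hiking trails near me"


def fake_vision(image: bytes) -> str:
    return "road bike"


print(normalize_to_text(AIInteraction("voice", audio=b"\x00"), fake_asr, fake_vision))
print(normalize_to_text(
    AIInteraction("image", text="What kind of bike is that?", image=b"\x00"),
    fake_asr,
    fake_vision,
))
```

Injecting the models as plain functions keeps the sketch testable and makes the design point visible: once every modality is reduced to text, a single downstream interest extractor can serve chats, voice, and camera queries alike.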

All these signals are then aggregated into Meta’s broader personalization models. “People’s interactions [with AI] simply are going to be another piece of the input that will inform the personalization of feeds and ads,” explained Christy Harris, a Meta privacy policy manager (reuters.com). The company is still building out the full systems to leverage this data, Harris noted (reuters.com, techcrunch.com), but the vision is clear: conversational interactions enrich the user model that Meta’s ranking algorithms use to decide what content (and ads) to show you. Over time, as models learn to weight these signals, one can imagine that asking Meta AI about “best 4K TVs” could significantly boost electronics content in your Facebook Feed or trigger TV-related ads on Instagram the next day.

Importantly, Meta is adding these AI-derived signals on top of its existing personalization stack, not replacing it (reuters.com, about.fb.com). Traditional signals like your social graph (friends, follows) and engagement history remain foundational. But now, AI chat becomes a new layer that can capture timely, intent-rich data in a way clicks or likes might miss. Meta’s announcement emphasizes that many people expect their interactions to make their feed more relevant over time, and that “soon, interactions with AIs will be another signal we use” to improve recommendations (about.fb.com). The update is positioned as meeting user expectations for relevance: if you show interest (even via a private chat with an AI), the system should notice and adapt.

Technical Architecture: Capturing and Processing AI Chat Signals

Under the hood, integrating AI chat signals into personalization required Meta to extend its data pipelines and models. The capture phase begins at the point of interaction: Meta AI is present in multiple contexts (chat threads, voice interface, camera), each producing a log of the user’s query and the assistant’s response. Meta’s systems likely flag the user’s side of these exchanges for analysis. A text query goes directly into natural language processing (NLP) systems; a voice query, as noted, passes through speech-to-text first (about.fb.com). If the AI is used visually (for instance, asking “what do you see in this picture?” via smart glasses), image recognition models process the visual input, and the interpreted result (e.g. “user showed interest in identifying a café storefront”) becomes textual metadata that can be fed into the personalization engine (techcrunch.com).
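The “flag the user’s side” step can be illustrated with a short filter over a conversation log. The `Turn` schema, the surface names, and the choice to discard assistant replies entirely are assumptions made for this sketch, not details Meta has disclosed.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class Turn:
    """One message in an assistant thread (hypothetical log schema)."""
    role: str     # "user" or "assistant"
    surface: str  # e.g. "messenger", "instagram_dm", "glasses_voice"
    content: str  # typed text, or a transcript/caption for voice and images


def user_side(log: Iterable[Turn]) -> Iterator[Turn]:
    """Yield only non-empty user turns.

    In this sketch the assistant's replies are dropped: they reflect what
    the model said, not what the user is interested in.
    """
    for turn in log:
        if turn.role == "user" and turn.content.strip():
            yield turn


log = [
    Turn("user", "messenger", "What camera should I buy for vlogging?"),
    Turn("assistant", "messenger", "Popular vlogging cameras include..."),
    Turn("user", "glasses_voice", "   "),  # e.g. an empty or failed transcript
]
for turn in user_side(log):
    print(turn.surface, "->", turn.content)
```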

Next comes the processing and inference stage. Meta’s AI backend will parse the conversation to infer topics or intents. We can think of it as real-time interest extraction: the system can tag a conversation about “hiking” with a hiking/outdoors interest category. This likely involves large language models or classifiers that map free-form dialogue to structured interest signals. In fact, building such a mapping is part of the ongoing work: Harris indicated Meta is still developing how exactly those AI interactions will improve ad products (techcrunch.com). Meta’s ad system already organizes targeting around interest categories (something like “Hiking & Outdoors”), and AI chats provide an explicit clue for slotting a user into those categories.
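As a stand-in for the LLM or classifier described above, here is a deliberately simple keyword-matching sketch that maps a free-form query to interest categories. The `TAXONOMY` dictionary and its labels are hypothetical; a production system would more plausibly use a trained model, but the input and output shapes (free text in, structured categories with scores out) are the same.

```python
import re
from collections import Counter
from typing import Dict, List

# Hypothetical interest taxonomy: category -> trigger keywords.
TAXONOMY: Dict[str, List[str]] = {
    "Hiking & Outdoors": ["hiking", "hike", "trail", "trails", "camping"],
    "Cameras & Photography": ["camera", "cameras", "vlogging", "lens", "photography"],
    "Cooking": ["recipe", "recipes", "cooking", "pasta"],
}


def extract_interests(text: str, min_hits: int = 1) -> Dict[str, int]:
    """Map free-form text to taxonomy categories by counting keyword hits."""
    tokens = Counter(re.findall(r"[a-z0-9']+", text.lower()))
    scores: Dict[str, int] = {}
    for category, keywords in TAXONOMY.items():
        hits = sum(tokens[keyword] for keyword in keywords)
        if hits >= min_hits:
            scores[category] = hits
    return scores


print(extract_interests("What are the best hiking trails around here?"))
print(extract_interests("Hey Meta, what kind of camera should I buy for vlogging?"))
```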

Meta’s privacy disclosures also clarify some boundaries in processing. Conversations with Meta AI that involve certain sensitive topics (e.g. religion, health, sexual orientation, politics) will be excluded from ad targeting (reuters.com, theverge.com). The system is designed to omit or sanitize signals that could violate policy or raise ethical issues in ad personalization. (Meta hasn’t said whether feed personalization will exclude these topics as well; such interactions might still inform content recommendations, or they might simply be ignored.) Additionally, encrypted personal messages remain untouched: Meta explicitly said that end-to-end encrypted chats in WhatsApp or Messenger are not processed for this AI signal feature (theverge.com). Only interactions with the AI assistant itself (which is a Meta service) count, and only if you choose to use it.
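The exclusion rules described above can be expressed as a filter applied before any interest reaches the ad system. This is a sketch under assumptions: the category labels in `SENSITIVE_FOR_ADS` are illustrative, and `ad_eligible_interests` with its two boolean flags is a hypothetical interface.

```python
from typing import Dict, FrozenSet

# Illustrative labels for the sensitive topics named above; Meta's real
# exclusion list and category names may differ.
SENSITIVE_FOR_ADS: FrozenSet[str] = frozenset(
    {"Religion", "Health", "Sexual Orientation", "Politics"}
)


def ad_eligible_interests(
    interests: Dict[str, float],
    is_assistant_chat: bool,
    end_to_end_encrypted: bool,
) -> Dict[str, float]:
    """Return the subset of inferred interests usable for ad targeting.

    - Only conversations with the assistant itself are eligible.
    - End-to-end encrypted person-to-person chats contribute nothing.
    - Sensitive categories are dropped before they reach the ad system.
    """
    if not is_assistant_chat or end_to_end_encrypted:
        return {}
    return {
        category: score
        for category, score in interests.items()
        if category not in SENSITIVE_FOR_ADS
    }


inferred = {"Politics": 0.9, "Cooking": 0.7}
print(ad_eligible_interests(inferred, is_assistant_chat=True, end_to_end_encrypted=False))
```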

After extraction, the integration stage merges these signals into Meta’s personalization models. Meta’s feed ranking and ad delivery systems are powered by machine learning models that take a large number of user and content features as input. Now, a feature like “interest_in_hiking=True (recent AI chat)” could be added to your profile. Meta’s official wording is that AI chat interactions “will be added to existing data such as likes and follows to shape recommendations” for both organic content and ads (reuters.com). Practically, this means that when the feed generator or ad selection algorithm runs, it will treat your AI-derived interests as part of its input.
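One way to picture the integration step is a merge of per-source interests into a single feature map, following the `interest_in_hiking` naming used above. The `feature_name` and `build_interest_features` helpers are hypothetical; the sketch simply records which sources support each feature so that a downstream model could weigh an AI-chat-derived interest differently from a like or a follow.

```python
import re
from typing import Dict, Iterable, Set


def feature_name(category: str) -> str:
    """'Hiking & Outdoors' -> 'interest_in_hiking_outdoors'."""
    return "interest_in_" + re.sub(r"[^a-z0-9]+", "_", category.lower()).strip("_")


def build_interest_features(
    sources: Dict[str, Iterable[str]],
) -> Dict[str, Set[str]]:
    """Merge per-source interest lists into one feature map.

    Keys are feature names; values record which sources (e.g. "like",
    "follow", "ai_chat") support that feature.
    """
    features: Dict[str, Set[str]] = {}
    for source, categories in sources.items():
        for category in categories:
            features.setdefault(feature_name(category), set()).add(source)
    return features


profile = build_interest_features({
    "like": ["Hiking & Outdoors"],
    "follow": ["Cooking"],
    "ai_chat": ["Hiking & Outdoors", "Cameras & Photography"],
})
for name, supporting in sorted(profile.items()):
    print(name, sorted(supporting))
```

Keeping provenance alongside each feature, rather than a bare boolean, is what lets the AI chat layer sit on top of likes and follows instead of overwriting them.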

One notable aspect is recency and context. A chat about hiking today suggests a current intent, whereas a Page like from five years ago might be a stale signal. AI chat signals could therefore be weighted more heavily for short-term personalization (“in-the-moment” interests). Mark Zuckerberg indicated earlier in 2025 that personalization and voice conversations are a key focus for making Meta AI the “leading personal AI” (reuters.com, techcrunch.com). This suggests the system will strive to quickly reflect what you last discussed with the AI. For example, if you ask Meta AI for Italian recipe ideas this week, you might notice more cooking videos or restaurant suggestions in the following days, aligning with that fresh intent.
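Recency weighting of this kind is commonly modeled with exponential decay. The sketch below assumes a per-source half-life; the `BASE_WEIGHT` and `HALF_LIFE_DAYS` values are invented for illustration, with chat signals starting strong but fading within weeks while likes fade over years.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Signal:
    category: str
    source: str      # "ai_chat" or "like" in this sketch
    age_days: float  # time since the chat or the like


# Hypothetical tuning: chats start strong but fade fast; likes fade slowly.
BASE_WEIGHT: Dict[str, float] = {"ai_chat": 1.0, "like": 0.5}
HALF_LIFE_DAYS: Dict[str, float] = {"ai_chat": 7.0, "like": 365.0}


def decayed_weight(signal: Signal) -> float:
    """Exponential decay: the weight halves every HALF_LIFE_DAYS[source] days."""
    half_life = HALF_LIFE_DAYS[signal.source]
    return BASE_WEIGHT[signal.source] * 0.5 ** (signal.age_days / half_life)


def interest_scores(signals: List[Signal]) -> Dict[str, float]:
    """Sum decayed weights per category."""
    scores: Dict[str, float] = {}
    for signal in signals:
        scores[signal.category] = scores.get(signal.category, 0.0) + decayed_weight(signal)
    return scores


signals = [
    Signal("Italian Cooking", "ai_chat", age_days=2),
    Signal("Hiking & Outdoors", "like", age_days=5 * 365),
]
print(interest_scores(signals))
```

With these made-up numbers, a week-old chat keeps half its weight, while a five-year-old like retains about 3% of its already lower base weight.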

Unified Personalization Across Apps via Accounts Center

A major strength of Meta’s approach is the cross-app integration of these AI signals. Meta’s Accounts Center, the feature that links a user’s Facebook, Instagram, Messenger, and optionally WhatsApp accounts, serves as the bridge that unifies personalization. According to Meta, if your accounts are linked in Accounts Center, interactions with Meta’s AI on one platform can inform recommendations on another (theverge.com). For instance, a one-on-one conversation with Meta AI in WhatsApp could later influence what ads or content you see on Facebook, as long as those accounts are connected. In Meta’s words: “We use information, including interactions with Meta AI, across Meta Company Products from the accounts that you choose to add to the same Accounts Center” (about.fb.com). This means that if you keep your Instagram and Facebook accounts in the same Accounts Center, talking to the AI on Instagram could shape your Facebook Feed too. However, if you keep WhatsApp separate (not added to Accounts Center), chats with the AI on WhatsApp won’t spill over into Facebook or Instagram personalization (about.fb.com).
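The Accounts Center rule can be sketched as a routing function: a chat on a linked app may inform every linked app, while a chat on an unlinked app stays put. The helper names are hypothetical, and the assumption that an unlinked app still uses its own chats locally belongs to this sketch, not to Meta’s stated policy.

```python
from typing import Dict, List, Set, Tuple


def shareable_apps(source_app: str, accounts_center: Set[str]) -> Set[str]:
    """Apps whose personalization may use an AI chat held in `source_app`.

    Linked source: the signal may flow to every linked app.
    Unlinked source: it stays with the app it came from (an assumption).
    """
    if source_app in accounts_center:
        return set(accounts_center)
    return {source_app}


def route_chat_interests(
    chats: List[Tuple[str, str]], accounts_center: Set[str]
) -> Dict[str, Set[str]]:
    """Fan (app, interest) pairs out to the apps allowed to use them."""
    per_app: Dict[str, Set[str]] = {}
    for app, interest in chats:
        for target in shareable_apps(app, accounts_center):
            per_app.setdefault(target, set()).add(interest)
    return per_app


linked = {"facebook", "instagram", "messenger"}  # WhatsApp kept separate
chats = [("instagram", "Travel"), ("whatsapp", "Gardening")]
for app, interests in sorted(route_chat_interests(chats, linked).items()):
    print(app, sorted(interests))
```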

From a product perspective, this cross-app data flow is potent. Meta effectively has a 360-degree view of user preferences across social networking (Facebook), visual discovery (Instagram), private messaging (WhatsApp/Messenger), and even hardware (smart glasses). The AI assistant resides in each of these surfaces, collecting signals in slightly different contexts, but ultimately all funneling into one personalization brain (subject to account linking and regional privacy rules). It’s not hard to imagine scenarios: You ask the Meta AI in Messenger for vacation ideas – next time you open Instagram, the Explore page might show travel reels, or a hotel ad might appear in your feed. Or you use Meta AI on your Ray-Ban glasses to ask “What kind of bike is that?” upon seeing a cyclist – later, Facebook might suggest cycling groups or show an ad for an e-bike brand. Meta is turning its entire family of apps and devices into a cohesive personalization network, with AI interactions as the connective tissue.

It’s worth noting that this strategy is partly constrained by privacy regulations in some regions. The rollout of AI-informed personalization is not initially happening in the European Union, the UK, or South Korea (reuters.com, theverge.com), likely due to stricter data laws and the need for clearer user consent. But in most other markets, Meta is moving full steam ahead with cross-platform data fusion. For users and product stakeholders, this means the traditional silos between apps are blurring: your “assistant persona” travels with you across experiences. Meta’s Accounts Center effectively becomes the hub of your AI-enhanced interest profile.

Product Layer Examples: Where AI Chat Meets UX

To understand how these AI chat signals are collected in practice, let’s look at how Meta has embedded the AI across its products:

- Meta AI in Messenger and WhatsApp: In these messaging apps, Meta AI exists as a chat contact, essentially a built-in chatbot you can message anytime. First introduced in late 2023 and rolled out much more widely in 2024, the assistant can answer general questions, provide recommendations, or just chit-chat. Every time a user opens this chat and types a query (or taps the microphone icon to dictate a question), that interaction is a new data point. For example, a user might ask in Messenger, “Hey Meta, what kind of camera should I buy for vlogging?” That text query is gold for personalization: it reveals an interest in cameras and content creation. According to Meta, one-on-one conversations with Meta AI in apps like WhatsApp and Messenger will be used to serve you ads or recommendations on another platform (if linked) under this update (theverge.com). From a UX standpoint, chatting with Meta AI doesn’t feel like using a search engine or clicking an ad; it’s natural-language and user-initiated. But behind the scenes, that chat could later translate into camera ads on Instagram. Product leads should note how Meta transformed a utility feature (AI Q&A in chat) into a stealth feedback loop for the recommendation system. The user experience remains one of helpful assistance, while in the background Meta’s models treat it as an explicit survey of your interests.

- AI interactions on Instagram (e.g. DMs and Threads): Instagram integrated Meta AI into its Direct Messages, allowing users to chat with the assistant much as in Messenger. Imagine an influencer messaging Meta AI for creative ideas or a teen asking for homework help: those topics could shape what appears on their Instagram Explore page or which ads they encounter while scrolling Stories. Moreover, Instagram is testing an AI chatbot in group chats and as a creative tool (like generating image stickers or photo captions). All these creative or conversational prompts on IG are new signals. If a user engages the AI to generate an image with Meta’s “Imagine” tool (techcrunch.com), even the text of that prompt can reveal preferences (e.g. prompting for a “vintage 90s fashion style image” hints at an interest in nostalgia or fashion). TechCrunch reported that Meta may use data from Imagine and from its AI video feed, “Vibes,” to target ads (techcrunch.com). So if someone creates a lot of anime-style AI images with Meta’s tools, they might start seeing more anime or art-supply content. Instagram’s core UX is visual and discovery-focused; by tying AI creation tools into it, Meta gains insight into creative interests that weren’t easily captured before.

- Ray-Ban Meta Smart Glasses (Voice and Vision): Meta launched smart glasses in partnership with Ray-Ban in fall 2023 and followed with new models in 2025; they integrate the Meta AI assistant via voice. Wearers can ask questions out loud (with a “Hey Meta” voice command) and hear the answers. Critically, the glasses include a camera, and the AI can identify or describe what you’re looking at. For example, you can look at a landmark or a product and ask Meta “What is this?” or “How much does this cost online?” The voice recordings and visual analysis from the glasses are explicitly mentioned as inputs for ads in the new policy (techcrunch.com). That means if you use your smart glasses to, say, look at a restaurant and ask Meta AI for reviews, Meta can log that you showed interest in that restaurant or cuisine. Later, your feed might suggest a food festival or you might get an ad for a dining app. The UX here is seamless (you interact with the world, and the glasses’ AI helps you), but in doing so, you give Meta a window into your physical-world interests. The glasses effectively translate visual experiences into digital signals. A user who frequently asks their glasses for “nearby coffee shops” could plausibly start seeing coffee-shop offers in their Facebook feed.

Illustration: Meta’s integration of AI across devices and apps means even a casual chat or a voice query can become a personalization signal. Meta’s ecosystem (Facebook, Instagram, WhatsApp, Messenger, Ray-Ban smart glasses) now acts as a unified network, constantly learning from both your clicks and your conversations.

- Meta AI in Group and Community Contexts: Beyond one-on-one chats, Meta is also exploring AI in more social contexts. Facebook Messenger, for instance, allows AI assistants (including themed personas) to be invited into group chats to settle debates or generate content. While details are sparse, one could imagine that if someone in a group chat asks the AI “What’s a good movie to watch?”, that could later shape their Facebook Watch recommendations. Facebook groups themselves might eventually have AI helpers, and those interactions (like asking an AI in a cooking group for a recipe) would feed into that individual’s profile. Meta’s longer play is integrating AI into feeds directly: for example, an AI-curated video feed called “Vibes” was launched for AI-generated short videos (theverge.com). User behavior there (which AI videos you watch or remix) becomes another signal loop. These examples show that AI isn’t just a separate chat app; it’s woven into the social fabric of Meta’s products, and each touchpoint is instrumented.
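Taken together, these touchpoints amount to a common event stream. The sketch below assumes each surface emits a normalized `InterestEvent` and that different touchpoints carry different evidential strength; the `Touchpoint` names and the `STRENGTH` multipliers are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Touchpoint(Enum):
    """AI surfaces described above (names are illustrative)."""
    MESSENGER_CHAT = "messenger_chat"
    WHATSAPP_CHAT = "whatsapp_chat"
    INSTAGRAM_DM = "instagram_dm"
    IMAGINE_PROMPT = "imagine_prompt"
    GLASSES_VOICE = "glasses_voice"
    GLASSES_VISION = "glasses_vision"
    VIBES_REMIX = "vibes_remix"


# Hypothetical multipliers: a direct question counts as stronger evidence
# than remixing a video. Real values would be learned, not hand-set.
STRENGTH: Dict[Touchpoint, float] = {
    Touchpoint.MESSENGER_CHAT: 1.0,
    Touchpoint.WHATSAPP_CHAT: 1.0,
    Touchpoint.INSTAGRAM_DM: 1.0,
    Touchpoint.IMAGINE_PROMPT: 0.6,
    Touchpoint.GLASSES_VOICE: 1.0,
    Touchpoint.GLASSES_VISION: 0.8,
    Touchpoint.VIBES_REMIX: 0.5,
}


@dataclass
class InterestEvent:
    user_id: str
    touchpoint: Touchpoint
    category: str


def aggregate(events: List[InterestEvent]) -> Dict[str, Dict[str, float]]:
    """Sum touchpoint-weighted evidence into one profile per user."""
    profiles: Dict[str, Dict[str, float]] = {}
    for event in events:
        profile = profiles.setdefault(event.user_id, {})
        weight = STRENGTH[event.touchpoint]
        profile[event.category] = profile.get(event.category, 0.0) + weight
    return profiles


events = [
    InterestEvent("u1", Touchpoint.IMAGINE_PROMPT, "Anime & Art"),
    InterestEvent("u1", Touchpoint.VIBES_REMIX, "Anime & Art"),
    InterestEvent("u1", Touchpoint.GLASSES_VOICE, "Coffee"),
]
print(aggregate(events))
```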

AI as a Core Personalization Layer

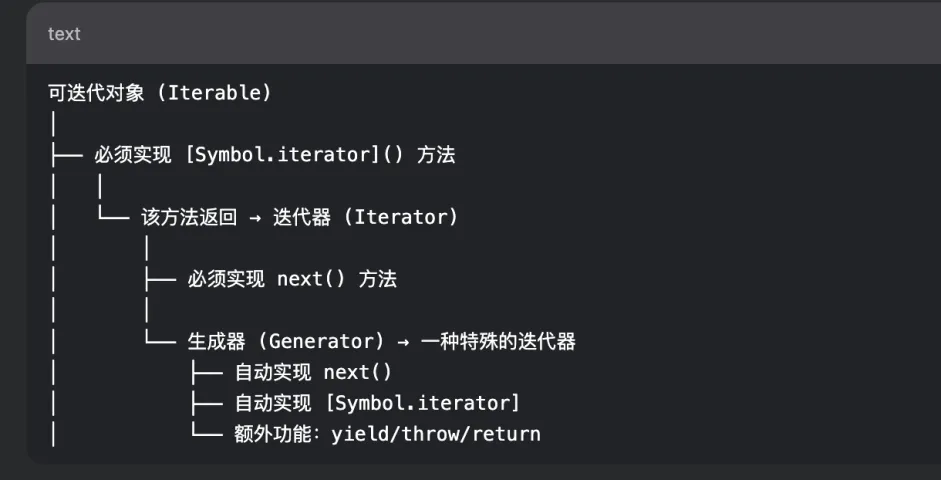

Bringing AI chat signals into the personalization stack effectively creates a new layer atop Meta’s classic ranking signals. Let’s consider how the layers stack up now:

- Social Graph Layer: Who you follow or befriend has traditionally determined baseline content (friends’ posts, followed Pages’ posts).

- Behavior Layer: What you engage with (likes, shares, comments, dwell time) informs recommendations of similar content or ads. This is the realm of collaborative filtering and predictive models – if you liked X, you might like Y.

- Contextual & Demographic Layer: Basic info like your location, age, device, as well as contextual signals like time of day or trending topics, which influence what content is considered relevant at the moment.

- AI Chat Layer (New): What you explicitly tell the AI about your needs, questions, or curiosities. This layer is highly intent-focused – it’s almost like asking the user “what do you want to see?” but in a natural way. It provides direct interest indicators that don’t have to be inferred via multiple clicks; the user’s own words describe their intent.
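A simplified way to see how the new layer stacks with the others is a weighted sum over per-layer relevance scores. Real ranking systems learn these interactions with large models rather than fixed weights, so `LAYER_WEIGHTS` and the `Candidate` fields here are purely illustrative.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Candidate:
    """A post or ad with per-layer relevance scores in [0, 1]."""
    item_id: str
    social: float         # e.g. posted by a friend or a followed Page
    behavior: float       # similarity to content the user engaged with
    context: float        # location, time of day, trending topics
    ai_chat: float = 0.0  # match with topics from recent AI chats (new layer)


# Hypothetical fixed weights; a production ranker would learn these.
LAYER_WEIGHTS: Dict[str, float] = {
    "social": 0.35, "behavior": 0.35, "context": 0.10, "ai_chat": 0.20,
}


def layered_score(c: Candidate, w: Dict[str, float] = LAYER_WEIGHTS) -> float:
    """Weighted sum of the four layers."""
    return (
        w["social"] * c.social
        + w["behavior"] * c.behavior
        + w["context"] * c.context
        + w["ai_chat"] * c.ai_chat
    )


def rank(candidates: List[Candidate]) -> List[str]:
    """Return item ids, highest score first."""
    return [c.item_id for c in sorted(candidates, key=layered_score, reverse=True)]


feed = [
    Candidate("friend_vacation_photo", social=0.9, behavior=0.4, context=0.5),
    Candidate("tv_review_video", social=0.2, behavior=0.3, context=0.4, ai_chat=0.9),
    Candidate("generic_meme", social=0.1, behavior=0.4, context=0.6),
]
print(rank(feed))
```

Here a recent chat about TVs lifts the review video above the meme; without its AI chat score (0.215 versus 0.235), it would rank last.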

Meta is essentially elevating this AI chat layer to be on par with the others. In Meta’s view, these interactions “will soon” be part of the signals used across “all of our platforms” to improve recommendations (about.fb.com). Mark Zuckerberg underscored this at the 2025 shareholder meeting, saying Meta aims to make Meta AI “the leading personal AI” with a big emphasis on personalization and voice conversations (techcrunch.com). It’s a strong hint that Meta sees voice and chat inputs as integral to how future users will shape their feed, possibly even more than clicking buttons. In essence, AI chat is being treated as a first-party data source for personalization, just like your on-platform likes and posts.

We can draw a parallel to search engines: typing queries into Google has long been an intent signal for Google’s ad targeting and content suggestions. Meta is now getting its own “query stream” via chats, without having a traditional search engine. Instead of search keywords, Meta gets conversational queries. From a product lead perspective, this is Meta building an intent harvesting mechanism native to its platforms. Users no longer have to leave to search for things – if they ask Meta’s AI, Meta captures that intent internally and can immediately close the loop by showing a relevant product or community on its own apps.

User Experience and Design Considerations

Integrating AI chat as a personalization signal has required careful UX communication. Meta had to inform users about this change, an unusual step that shows its significance. Starting in early October 2025, users received in-app notifications and emails explaining that their interactions with Meta’s AI may be used to personalize content and ads (about.fb.com). The notification (as shown above) makes it clear that this is an AI-driven improvement to recommendations, and it gives users time (until December 16, 2025) to understand or adjust. Meta has also highlighted controls: users can adjust their Ad Preferences or use feed controls to influence what they see (about.fb.com). However, they cannot opt out of this use of AI chats short of not using the AI features at all (theverge.com, techcrunch.com). This design choice (no opt-out) underscores how core Meta believes this is to its product experience. If you engage with its AI, that engagement is implicitly part of the personalization contract.

From a UX integration standpoint, Meta is attempting to make these AI interactions feel native and beneficial. The assistant is portrayed as making the user’s experience more convenient and fun (helping accomplish tasks, entertaining, etc.), while invisibly tuning the algorithm in the background. Ideally, users just notice their feeds becoming more relevant to things they care about, without feeling “creeped out” that “Facebook showed me boots right after I asked the bot about hiking.” Meta’s messaging in its Privacy Center assures users that voice features access the microphone only when permission has been granted and the feature is active (about.fb.com), trying to preempt any notion that it’s “always listening” (a concern that often plagues voice assistants). The challenge for product design is ensuring users see the AI as a trusted buddy rather than an intrusive spy. Meta is navigating this through transparency (notifying users) and by allowing some review of ad interests (users can see and remove topics in Ad Preferences, presumably including those gleaned from AI chats).
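The review-and-remove control can be sketched as a small profile object. Everything here is hypothetical (`AdInterestProfile` is not a Meta API), and one behavior is an explicit assumption: once a user removes a topic, later AI chat signals about it are ignored rather than silently re-adding it.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class AdInterestProfile:
    """Toy model of user-visible ad interests (hypothetical, not Meta's API)."""
    scores: Dict[str, float] = field(default_factory=dict)
    sources: Dict[str, Set[str]] = field(default_factory=dict)
    removed: Set[str] = field(default_factory=set)

    def add_signal(self, topic: str, weight: float, source: str) -> None:
        # Assumption: once a user removes a topic, new signals do not revive it.
        if topic in self.removed:
            return
        self.scores[topic] = self.scores.get(topic, 0.0) + weight
        self.sources.setdefault(topic, set()).add(source)

    def visible_topics(self) -> List[str]:
        """Topics a user would see listed in their ad preferences."""
        return sorted(self.scores)

    def remove_topic(self, topic: str) -> None:
        """User-initiated removal; also blocks future re-adds of the topic."""
        self.scores.pop(topic, None)
        self.sources.pop(topic, None)
        self.removed.add(topic)


prefs = AdInterestProfile()
prefs.add_signal("Hiking & Outdoors", 1.0, source="ai_chat")
prefs.add_signal("Cooking", 0.5, source="like")
prefs.remove_topic("Hiking & Outdoors")
prefs.add_signal("Hiking & Outdoors", 1.0, source="ai_chat")  # ignored
print(prefs.visible_topics())
```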

Finally, we should touch on performance and iteration. Meta indicated it’s “still in the process of building the first offerings that will make use of this data” (reuters.com). Initially, the effects might be subtle or limited. Over 1 billion people use Meta AI monthly as of late 2025 (reuters.com, about.fb.com), generating a massive volume of conversations. It will take time (and machine learning experiments) to integrate that firehose into the recommendation engine effectively. We can expect continuous tuning: Meta’s models learning how much weight to give a chat signal versus a click, how to decay the influence of an old conversation, how to handle off-topic chat, and so on. On the product side, new features will likely emerge too. For example, if Meta notices you asked the AI something it can’t fully answer by itself, it might proactively show you user-generated content on that topic in your feed (“You asked Meta AI about gardening tips. Here are some popular gardening groups you might like”). Product leads should anticipate a wave of AI-driven personalization enhancements that blend search and recommendation. Meta’s personalization stack is becoming more conversational, predictive, and unified across its offerings.
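The proactive-suggestion idea above could look something like the following sketch. `ChatInterest`, `proactive_card`, the 72-hour freshness window, and the card wording are all illustrative assumptions about how such a feature might work, not a description of anything Meta has shipped.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ChatInterest:
    topic: str        # taxonomy topic inferred from the chat
    query: str        # short paraphrase of what the user asked
    age_hours: float  # time since the conversation


def proactive_card(
    interest: ChatInterest,
    groups_by_topic: Dict[str, List[str]],
    max_age_hours: float = 72.0,
    max_groups: int = 3,
) -> Optional[str]:
    """Build a feed card pointing from a recent AI chat to related groups.

    Returns None when the chat is too old (stale intent) or no groups match.
    """
    if interest.age_hours > max_age_hours:
        return None
    groups = groups_by_topic.get(interest.topic, [])[:max_groups]
    if not groups:
        return None
    return (
        f"You asked Meta AI about {interest.query}. "
        f"Here are some popular {interest.topic} groups you might like: "
        + ", ".join(groups)
    )


groups = {"gardening": ["Urban Gardeners", "Balcony Vegetables", "Seed Swap"]}
print(proactive_card(ChatInterest("gardening", "gardening tips", age_hours=5), groups))
```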

In summary, Meta’s December 2025 update cements AI chat interactions as a core part of the product playbook for personalization. By capturing text, voice, and visual signals from user-AI interactions, Meta enriches its understanding of user intent in real time. The technical architecture ingests these signals much like any other user activity, but with the advantage of direct expression. Through Accounts Center, Meta unifies this across its app family, turning separate products into a single personalization ecosystem. Product examples from Messenger to smart glasses show the breadth of integration: wherever the user can converse or query, personalization gold can be mined. For product leads, this represents an expansion of the traditional feedback loop; no longer are we limited to implicit signals, because we now actively leverage what users say they want. Meta’s personalization stack has evolved from simply watching what users do to listening to what they say. The playbook ahead for Meta and others will likely involve refining these AI interactions, balancing privacy, and continually demonstrating to users that a smarter, more tailored experience awaits as a result. Meta is betting that AI chat will shift personalization from reactive to proactive, and as this rolls out, every like, follow, and now every chat will count in shaping the user’s journey (reuters.com, emarketer.com).